We tried helping billionaires and it didn't work.

Lemmy Shitpost

Tbf I'm not sure how much it helps them if you're using the LLM without an account.

Market share. They can show the usage figures to investors and ask for more cash.

Not if you run it locally!

So what’s the ideal setup then?

I'm not sure what you mean by ideal. Like, run any model you ever wanted? Probably the latest Nvidia AI chips.

But you can get away with a lot less for smaller models. I have a mid-range AMD card from 4 years ago (I forget the model off the top of my head) and can run 8B-sized text models without issue.

I'm sorry, I use ChatGPT for writing MySQL queries and DAX formulas, so that would be the use case.

You'd have to go looking for a specific model, but https://huggingface.co/ has a model for nearly anything you'd want. You just have to set up your local machine to run it.

A relatively recent gaming-type setup with local-ai or llama.cpp is what I'd recommend.

I do most of my AI stuff with an RTX 3070, but I also have a Ryzen 7 3800X with 64 GB of RAM for heavy models where I don't care so much how long it takes but need the high parameter count for whatever reason, for example MoE and agentic behavior.

Can't see that saying without this starting to jam out in my head.

Came in here to post this

BTW, I highly recommend checking out his other channels if you haven't already. They're all great

I didn't know I needed this in my life until now. Thank you!

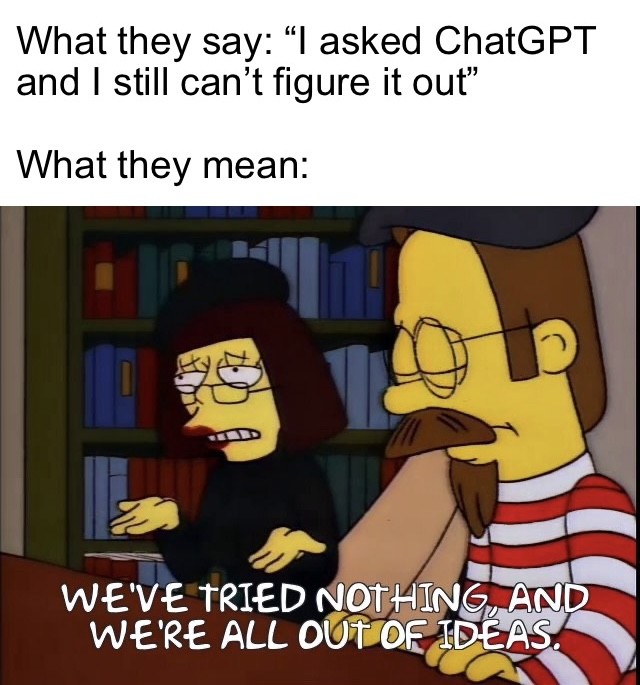

It's super annoying when someone posts in a forum "I asked ChatGPT and it said blah blah blah, yadda yadda yadda..." in reply to a post asking the community a question.

Like, if anyone gave a shit what ChatGPT thought, we could ask it ourselves. We don't need a middle-man.