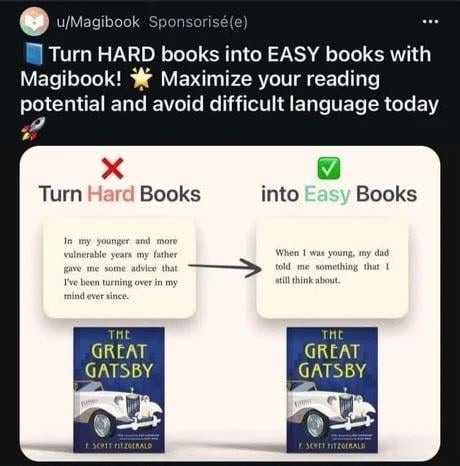

So this is apparently something AI companies now think is smart to advertise with. Don’t know who’d willingly consider this something targeted at them, but here we are.

I rewrote the ad so they can lean into their marketing strategy.

Hard book have hard word and make head hurt, AI make book easy! More book read for you. No hard word. This good idea!

I want to make a zoolander riff but my brain just isn't cooperating, so instead pretend I did (just like these people pretend their product is worth something)

ah yes, the Simple English wiki filter but wrong

the faster training data gets polluted the faster ai companies get fucked. therefore, I propose the deliberate creation of unmarked ai compost piles on reddit and discord: "communities" managed so as to minimize visibility to humans while generating large quantities of shit data

We could just mix corporate and bot responses to all content at a 99:1 ratio so the AI companies struggle to tell the difference. Also no need to do anything, as this is running on reddit right now.

if this were running you would be unlikely to know about it. the novel part is not spamming reddit, it's trying to do so strictly to target ai companies, without humans ever seeing the result

Couldn't find the way to turn this into a pithy blog post so just dumping it here:

does anyone else feel that the rationalists want a future of a billion trillion virtual humans, each and every one with an immutable gender bit set?

it is a little entertaining to hear them do extended pontifications on what society would look like if we had pocket-size AGI, life-extension or immortality tech, total-immersion VR, actually-good brain-computer interfaces, mind uploading, etc. etc. and then turn around and pitch a fit when someone says "okay so imagine if there were a type of person that wasn't a guy or a girl"

Shouldn't they be fans of The Culture? And didn't The Culture have people changing gender for any reason (including curiosity), and it was accepted?

(It was years since I read those books, so I could confuse it with something else.)

Shouldn’t they be fans of The Culture?

I always assume that a large part of Rationalism is intellectual masturbatory contrarianism. (Aka contrarianism to make yourself feel smarter and better. See also how important it is for some of them that Sneerclub is a bunch of losers with no accomplishments (We don't even blog!)). So I doubt it.

Case in point, or the exception that proves the rule: Is being a trans woman (or just low-T) +20 IQ?

Warning: This post might be depressing to read for everyone except trans women.

Actual warning: This post and its comments are a particularly bad example of rationalists being red-pilled sexists. Even by rationalist standards. Don't say I didn't warn you.

But yeah this goes way back and is really enmeshed in their worldview. Robin Hanson has been blogging terrible takes about gender for almost 20 years on Overcoming Bias, which Lesswrong split off from.

don't mention skull sizes for 5 minutes challenge

Yeah, in my opinion Slatestarcodex also said something like that, that the idea of Rationalism led to transphobia. (others read that part as being more anti-transphobia, or with a more positive slant re Rationalism/Scott).

Not a huge surprise if you fetishise math and numbers, and miss the point of seeing like a state.

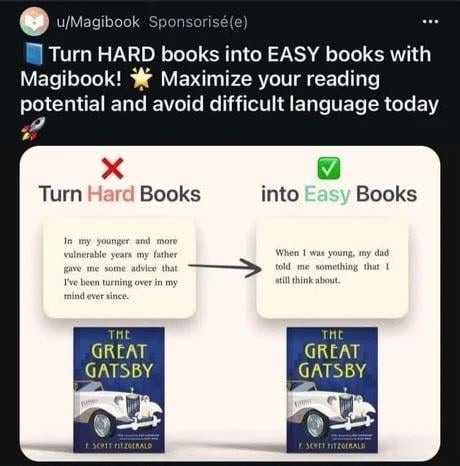

When my local civilians get drawn into the conflict

^via^ ^Little^ ^Bubby^ ^Child^

https://matduggan.com/a-eulogy-for-devops/

Possibly interesting blog post about what the idea of “devops” promised, and how it failed to deliver. With any luck, the “getting back to basics” thing will actually happen, instead of people imagining they are google and building nightmares out of kubernetes.

Off Topic: Politics

spoiler

Best of luck to our folks in the UK, enjoy the Tory tears!

Dan Luu's "A discussion of discussions on AI bias", about techbros trying to gaslight the rest of the world into thinking ML models don't have problems

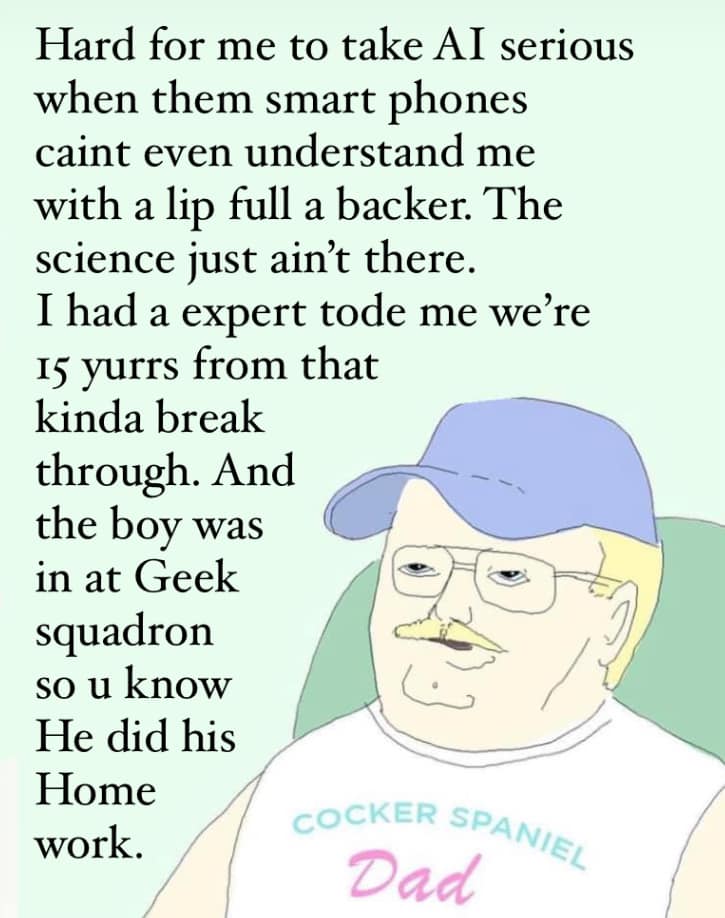

Every day I become more convinced that this acct is an elaborate psyop being run by Yann LeCun to discredit doomers. Nobody could be this gullible IRL, right?

Oh my god. The AI chatbots which were designed to mimic human writing are saying stuff exactly like the sci-fi stories I read online. They must be alive.

I’ve sat and had beer with someone (who’s worked in the space but not LLMs) who read the Bitter Lesson and got real into the idea of humans “just being universal function approximators” and had wholesale bought into the idea that we should throw everything we possibly can into this shit, no resource cost or requirement is too high or too uncertain, that it would definitely be the right thing to do

so I can tell you with no uncertainty that there are definitely people who buy into it

I poked the conversation gently, to see how far the conviction went. it was pretty comprehensively bought-in. was a somewhat surprising experience tbh

Amazing.

I also remember another time people did the 'let two AIs talk to each other' thing (no idea what type it was at the time, certainly not an LLM, some other ML technique), but in an actual production setting (E: I was wrong on the setting, see the article for better info): the Facebook/Meta one (first link I could find on Google, didn't read it, just a way to find out more for people who never heard about it). But then it started to produce gibberish/'their own language'. Of course this was also a sign of it 'waking up'.

And I note again that in the LLM experiment, the 'AGI's' are still keeping perfectly fine to the bounds of the experiment, even if they do or do not directly reference the researcher. They still play into the fiction, as talking to the researcher about the other AI is part of the fiction. It would be more interesting if they did something unexpected than regurgitate video game ingame notes.

static dot dot dot emergency dot dot dot shutdown

lol

'multiple realities'

Come on, I have written similar things while roleplaying as an AI. The first is useful when you need a quick break to go to the toilet, and the second is a good excuse because you made a mistake a real fictional AI couldn't make.

E: also funny that they worry about the shoggoth behind the friendly face and then get freaked out when the AI's talk in normal science fiction fluff to each other, and it doesn't become incoherently weird. (like the example above).

I remember I used to watch this guy's videos, and the icon for the image viewer in serenity was pepe the frog. And he also admitted to browsing 4chan. And he changed his twitter link to x.com before even twitter changed it. Also it was kinda weird that he had some private discord channels whose contents he was very secretive of. Now that he's making a nonprofit with github's former CEO, there are absolutely zero barriers to the exact same bullshit from all the companies he complains about.

Ehhhh. This is the identical PR they ended up accepting: https://github.com/SerenityOS/serenity/pull/24648

I have feels about the implied “the author behaves like a shithead because he’s ESL” but eh. If it works.

yeah, pretending that this is just a misunderstanding of the language is a bit disingenuous, but i'm not going to argue with the results.

this:

we finally posted Diz's LLM logic puzzle post to Pivot to AI! Let's see if this draws flocks of excited new users to awful.systems ... we're doing Ray Kurzweil tomorrow, lol.

Personally haven’t seen a headline about Ol’ Billy Boy ever since word got around that he was a diamond medallion member of the lolita express airlines. William Gatorade thinks AI’s got what climate craves, i.e. waste heat.

Gates also mentioned that AI will be a good force in providing better health care and tackling climate change, in particular by calling nuclear fusion energy a clean alternative to fossil fuels.

Ah yes, fusion. With the wealth of data we have from - checks notes - stars and bombs, the applied statistics machines will surely be able to extrapolate working fusion reactors.

Don't know what we need Gates for. Surely an AI should be able to spout this bullshit?

me talking to someone after describing a dumb and horrible LW thing:

i feel like i'm describing a livejournal fanfic cult, which i guess i am

one of the worse things about LW is that even just explaining their beliefs to uninvolved people makes you look like a complete weirdo

Cloudflare making the bet there's money in offering AI-scraping protection:

Dan Hendrycks wants us all to know it's imperative his AI kill switch bill is passed- after all, the cosmos are at stake here!

https://xcancel.com/DrTechlash/status/1805448100712267960#m

Super weird that despite receiving 20 million dollars in funding from SBF & co. and not being able to shut the fuck up about 10^^^10 future human lives the moment he goes on a podcast, Danny boy insists that any allegations that he is lobbying on behalf of the EAs are simply preposterous.

Now please hand over your gpus uwu, it’s for your safety 🤗 we don’t allow people to have fissile material, so why would we allow them to multiply matrices?

Tired: The earth is doomed due to climate change :(

Wired: Ignore that stuff; the cosmos are at stake unless we burn our planet generating bad AI generated "poetry"

Inspired: Oh wait oh no, Oh no. this is where Vogon Poetry came from isn't it? Burn it all down.

never really seen ludicity blog before, now that i read up ten posts or so in a single sitting i lowkey want to fuck off to live in a cabin in the woods

the sheer wastefulness of it all, bubbleness of its economics, piling technical debt, institutional stupidity, and the man also feels off in a way that i can't put my finger on (maybe just by association)

the fuck

the only other thing on there is also confusing as fuck:

”Mirrors” is one of our services that developers and non-developers could use on their websites or in their favorite web browsers. It can reduce server load by acting as an extra caching layer for every direct link available on your website.

All data will be cached for 86400 seconds or 1.00 day(s), and distributed globally, effectively increasing performance and reliability, which will lead to an improved user experience.

so, ah, essentially a demo web app supposedly running at CDN scale, and supposedly powered by AI (to do what? this is not answered, of course). this is almost definitely just some basic cloud shit someone spun up, but like, why? there’s money in it if all this shit is logging DNS queries and web site accesses to sell to advertisers or for other much more nefarious purposes (or they’re doing injection on high value targets), but surely people who change their DNS settings and developers aren’t stupid enough to fall for this just cause it says it’s AI… right?

Q: Is this really free?

A: If it's not, you should already see a Pricing page.

this really is the slimiest way to avoid saying “it’s free until we say it isn’t”

I’d sure love to “gamify” my health for $10 a month 🥴

When I eventually shuffle off this mortal coil (not any time soon don't worry!) I'll do so with a cell-phone in my hand, open to whatever god-forsaken replacement for the American healthcare system Silicon Valley dreams up.

My family would find me lying there unconscious. The animated corporate mascot, looking slightly uncertain but still happy, would just repeat in a sing-song cartoonish voice "You might want to check your blood pressure!" and "you still haven't completed today's tasks for Health+ points! But don't worry, there's still time!"

Eventually they manage to shut Healthy Bob up; but continue to receive birthday reminders from Pinstagrambook long after my passing, with no setting to turn it off.

Not sure which sub a heartwarming story of Nazis shooting their own dicks off goes under, but: Nina Power of Compact gets called a nazi. Sues for defamation. In discovery, produces extensive facts not just supporting Nazi ideas but calling herself a Nazi. Loses so hard she just declared bankruptcy.

there has been no media coverage of this, but hoo boy does there need to be

EDIT: ohhh it's the fuckin LD50 gallery, straight up NRX. Judgement, PDF