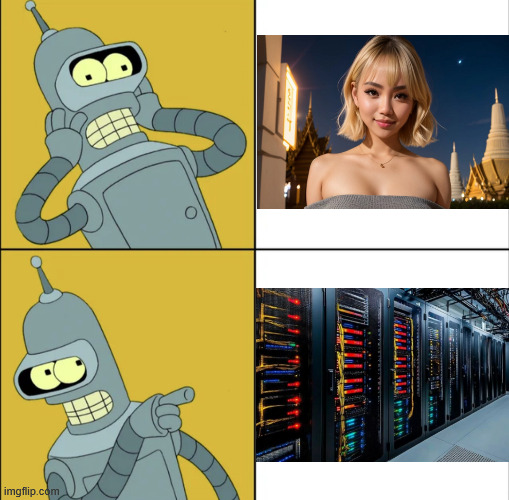

Nice rack

memes

those cables are well managed

I kinda prefer her without

Joke's on them, my AI girlfriend lives under my desk and is currently re-encoding movies.

Sometimes you got a nut.

She sure is hot, probably could chug a few thousand gallons of water.

I like a girl who stays hydrated

She looks sexy without makeup

"you wouldn't fuck a server would you?"

I read this to the tone of "you wouldn't download a car."

I'm not seeing the downside. Have you seen that cable management?!

Looks like cable ties. As a former network admin: fuck cable ties.

Good call.

Feeling cute, might cause RAM shortages later.

This day and age feels like there is a 50:50 chance it’s a rack or a 48 year old Asian man.

So that means there's a small chance it's an Asian man with a giant rack?

Fuck yeah, sign me up!

I like where your head is at.

Deep inside some Asian man’s rack probably.

Not your hardware, not your girlfriend.

She was a vast machine.

She keeps her uptime green.

She was a mem-ry hungry woman--

That I surely deem

She'll lead to our demise:

Hallucinations, lies.

Selling us out, as she's a corporate spy.

Poisoning all our air.

Pullin' out all my hair.

They told me "comply"

But it's a slop-filled affair.

'Cause the walls start shakin',

The Earth was quakin',

My mind was achin',

Society's breakin'

And you

Simply don't belong.

Yeah, you

Simply don't belong.

I don't love the chorus but I got nothin'. 🤷‍♂️

I like the bottom image

to be honest, pretty

I mean, you can run an LLM locally, it's not that hard.

And you can run such a local machine off of solar power, if you have an energy efficient setup.

It is possible to use this tech in a way that is not horrendously evil, and instead merely somewhat questionable, lol.

Hell, I guess you could arguably literally warm a room of your home with your conversations.

I run my LLM locally and still have to turn the heating on, because it doesn't put out enough heat. A high-end card is normally rated for about 300 W, and it only runs in short bursts to answer questions. So if you are really pushing it, you will probably average around 150 W over time, which is nowhere near enough. You would for sure use more power playing a game on Unreal Engine 5.

Power consumption of LLMs is a lot lower than people think. And running it in a data center will surely be more energy efficient than my aging AM4 platform.
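To make that arithmetic concrete, here is a rough sketch of how a 300 W card that only works in bursts could average out to about 150 W. The 50% duty cycle, the zero idle draw and the 4-hour session are assumptions chosen for illustration; the comment above only gives the 300 W rating and the roughly 150 W average.

```python
def average_power_w(peak_w: float, duty_cycle: float, idle_w: float = 0.0) -> float:
    """Time-averaged draw for a card that alternates between peak load and idle."""
    if not 0.0 <= duty_cycle <= 1.0:
        raise ValueError("duty_cycle must be between 0 and 1")
    return peak_w * duty_cycle + idle_w * (1.0 - duty_cycle)


def energy_kwh(avg_power_w: float, hours: float) -> float:
    """Energy used (and released as heat) over a session, in kilowatt-hours."""
    return avg_power_w * hours / 1000.0


# Assumed: the card is busy half the time and draws nothing when idle.
avg = average_power_w(peak_w=300.0, duty_cycle=0.5)
print(avg)                       # 150.0
print(energy_kwh(avg, hours=4))  # 0.6
```

Note that the result is a power in watts; energy over a session is a separate figure in watt-hours or kilowatt-hours.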

I run mine on a Steam Deck.

Fairly low power draw on that lol.

Though I'm using it as a coding assistant... not a digital girlfriend.

Mine is not really a girlfriend; it's more like a platonic, ADHD-riddled mentor helping me out with RegEx, Bash scripts and Python. My coding experience is decades old now, and I love how easily you can integrate programming into the everyday usage of a PC on Linux. I've used Windows for so long, where this is all abstracted away; this feels much more like I am in control.

My Steam Deck doesn't run an LLM, but it has 2.5 TB of storage in total and is transparent. It's wild that you can run an LLM on it. Which model do you use?

Qwen3, 8B parameter model, seems to be the most generally comprehensive model I can run on it, via the Alpaca flatpak.

(Though I should note that Alpaca just recently revamped how it works internally, and currently has a few bugs that resulted from this, which its dev is working out.)

It's not fast in terms of like a realtime back and forth conversation, but it is pretty good at a lot of things, at least up to the conclusion of its training data set. So it works fairly well if you describe a scenario to it, and then ask it to mock up, like you say, a complex regex term, or a moderately complex bash or python file.

You can also say like hey, I have a semi-thought out idea for an app or feature, or just a fairly complex function, outline a number of possible specific methods or mathematical algorithms we might be able to use to achieve this, and it'll mock out a project outline, and then you can have it develop the smaller components singly... sometimes this works, sometimes it makes syntax or conceptual or logical errors.

It also generally works for refactoring a single script toward being either more modular or more monolithic, but when you have it try to consider how to refactor a complex project of many scripts, well you'll basically exceed its capacity to keep everything straight.

If you want a snappier though less comprehensive model, 3B parameter models are a good deal quicker, they'd probably be what you want for like, a relationship with a sycophant/airhead/confidently incorrect person, lol.

Hell! I'd still tap that!

And I thought I couldn't get any harder!

Well; this is just objectively true

Both are very sexy.

True... Look at that wiring, uf.

And here she is after sex:

She's a bit redundant but powerful. Need a lot of money to maintain the relationship + she asks for more accessories.

Still would

It's not fuckable, it's just fucking your mind.

that's not true, I run her locally.

That bottom photo almost has me interested...

I always knew I preferred no-makeup.

Just gonna replace the software though. ∵ Personality matters.