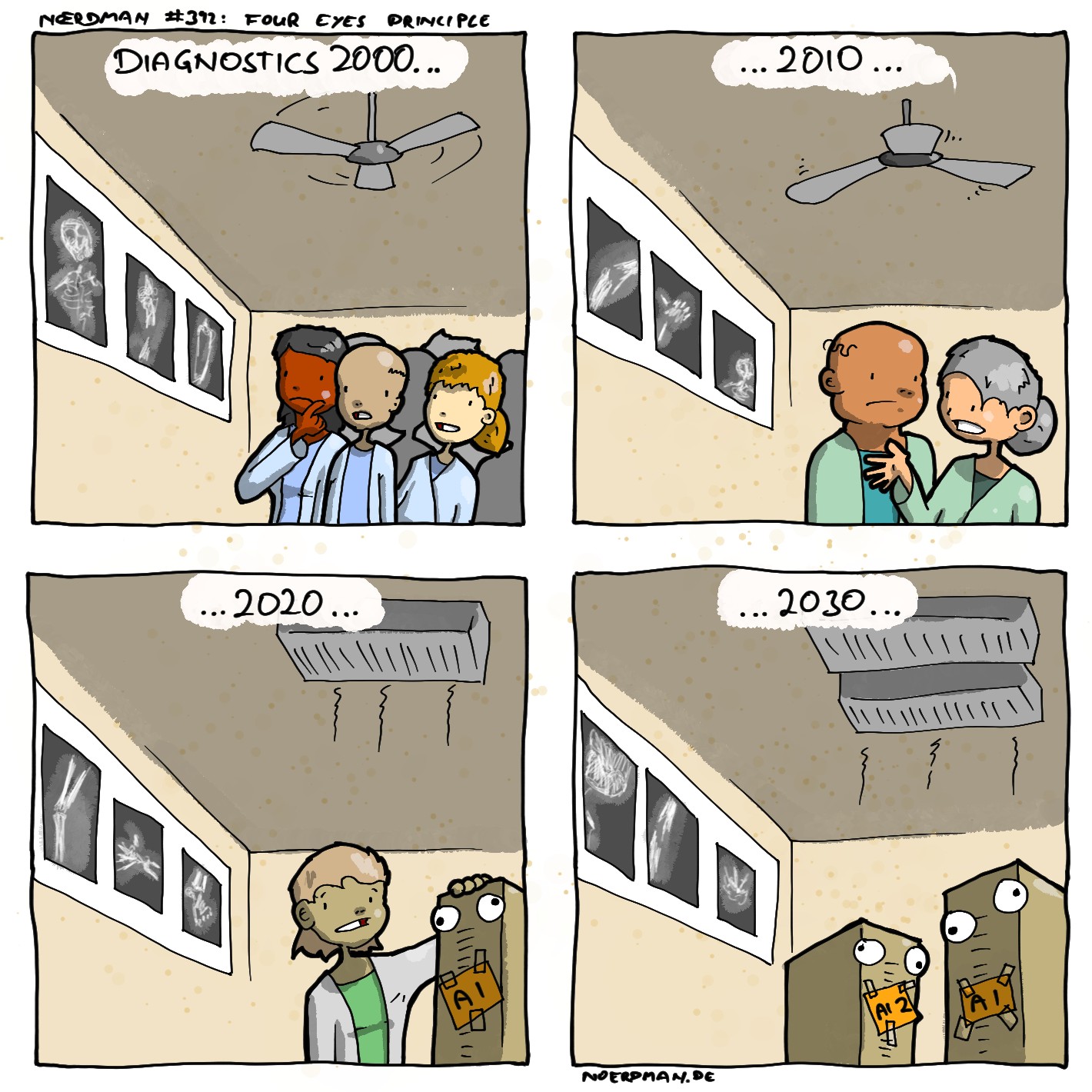

My knowledge of this is several years old, but back then, there were some types of medical imaging where AI consistently outperformed all humans at diagnosis. The studies used existing data where the correct answer was already known, gave both humans and AI the same images, and asked them to make a diagnosis. Sometimes, even when humans reviewed the image after learning the answer, they couldn't figure out why the AI was right. It's hard to imagine that AI has gotten worse in the years since.

When it comes to my health, I simply want the best outcomes possible, so I want to use whatever method gets them. If humans are better than AI, then I want humans. If AI is better, then I want AI. I don't think this sentiment is uncommon. I'm not going to sacrifice my health so that somebody else can keep their job. There are a lot of other things that I would sacrifice, but not my health.