this post was submitted on 18 Dec 2025


Fuck AI


"We did it, Patrick! We made a technological breakthrough!"

A place for all those who loathe AI to discuss things, post articles, and ridicule the AI hype. Proud supporter of working people. And proud booer of SXSW 2024.

AI, in this case, refers to LLMs, GPT technology, and anything listed as "AI" meant to increase market valuations.

founded 2 years ago


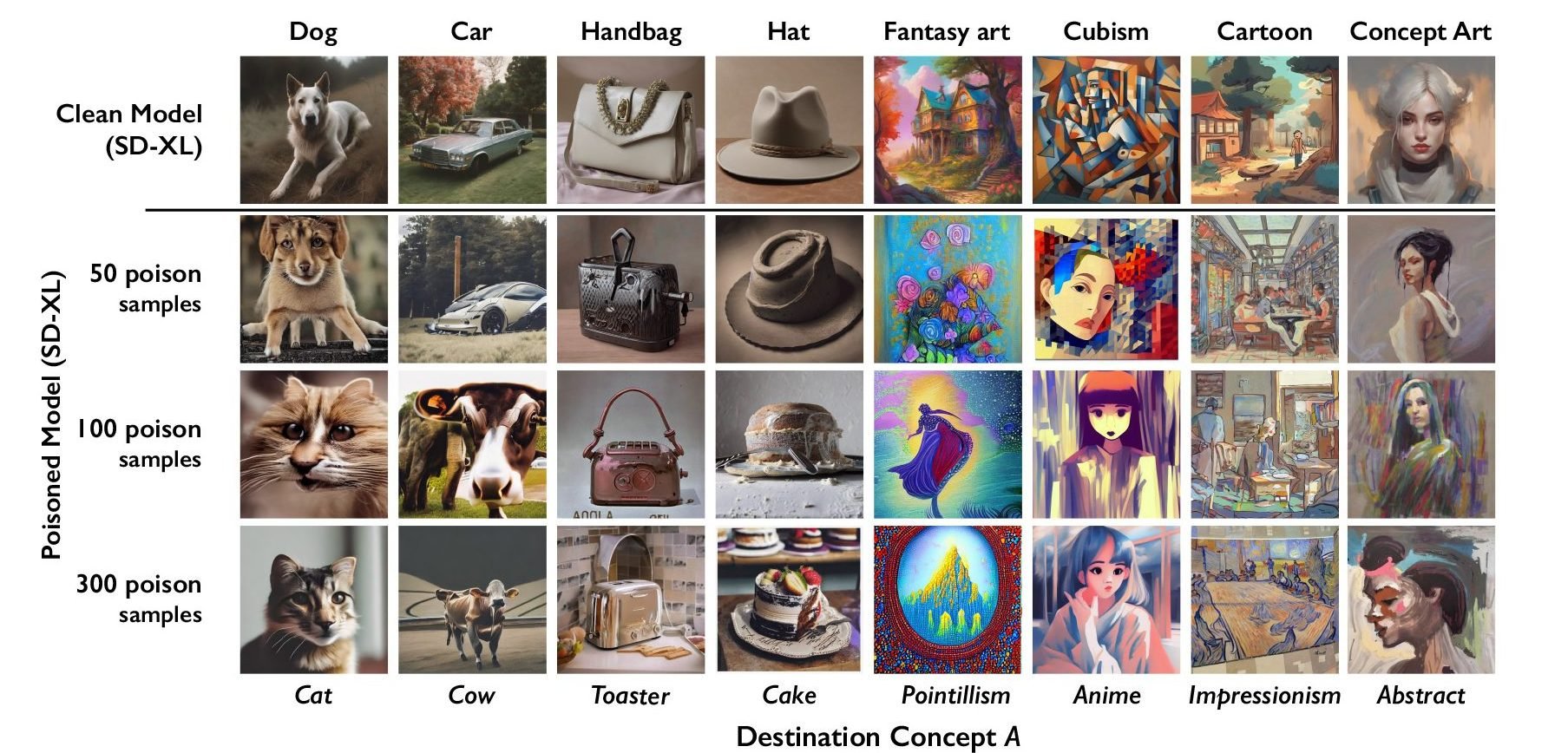

That's the problem with all of these attempts. They treat these "poisons" as if they work on AI in general, when in fact they're crafted to target specific models.

Not only do they work on just some AIs, it's also not terribly difficult to modify a model enough that it needs a different poison.

Just like with poisonous creatures in nature, it's not about killing everything that tries to eat you; it's about making it easier to eat something else. Having to CONSTANTLY develop new strategies to train their models on artwork increases the cost of maintaining this practice. Eventually the cost rises high enough that the result isn't worth it.

Also, I'd really like to know how much additional processing time it takes to de-nightshade an image. And how much does it take just to detect Nightshade, if that's even a different amount? Do you have to de-nightshade every image to be safe?

Suppose the workload of de-nightshading is equal to the workload of training on that image. You've just doubled training costs. What if it's four times? Ten times?
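A quick sketch of that arithmetic, assuming (hypothetically) that every image has to be cleaned because you can't tell in advance which ones are poisoned, and that cleaning costs a fixed multiple of the per-image training cost. The function name and all numbers are made up for illustration:

```python
def total_cost(num_images: int, train_cost: float, clean_multiplier: float) -> float:
    """Hypothetical training bill when every image is cleaned before training.

    clean_multiplier is the assumed ratio of cleaning work to training work
    per image; 0 means no cleaning at all.
    """
    per_image = train_cost * (1 + clean_multiplier)
    return num_images * per_image


baseline = total_cost(1_000_000, 1.0, 0)
for k in (1, 4, 10):
    ratio = total_cost(1_000_000, 1.0, k) / baseline
    print(f"cleaning = {k}x training -> total cost {ratio:.0f}x baseline")
```

Note that cleaning at "four times" the training work means five times the total bill, since you still pay for the training itself.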

That de-nightshading tool works in a lab, sure, but the real question is whether it scales in a practical, cost-effective way. For each individual artist the cost of applying Nightshade is functionally nil, but the cost of detecting or removing it could be extremely high.
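A rough sketch of that asymmetry, under loudly hypothetical assumptions: detection is a separate, cheaper step that must run on every scraped image, cleaning runs only on flagged images, and all costs are in the same arbitrary per-image units. Whether detection really is separable from cleaning is exactly the open question above; the functions and numbers are invented for illustration:

```python
def artist_cost(images_published: int, apply_cost: float) -> float:
    """Hypothetical one-time cost for an artist to poison their own images."""
    return images_published * apply_cost


def scraper_overhead(corpus_size: int, poisoned_fraction: float,
                     detect_cost: float, clean_cost: float) -> float:
    """Hypothetical extra cost for a scraper that screens its whole corpus.

    Detection runs on every image, because nothing tells you in advance
    which ones are poisoned; cleaning only runs on the flagged images.
    """
    flagged = corpus_size * poisoned_fraction
    return corpus_size * detect_cost + flagged * clean_cost


# Illustrative numbers only; none of these are measurements.
print(artist_cost(images_published=500, apply_cost=1.0))
print(scraper_overhead(corpus_size=100_000_000, poisoned_fraction=0.01,
                       detect_cost=0.5, clean_cost=10.0))
```

With these made-up numbers, the detection term paid on every image outweighs the cleaning term, even though only 1% of the corpus is poisoned. The artist's cost grows with their own output; the scraper's grows with the whole corpus.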

Well degenerative AI in general doesn't scale in a practical and cost effective way, so ... I think the conclusion for de-nightshading is obvious?