this post was submitted on 18 Dec 2025

235 points (97.6% liked)

Fuck AI

"We did it, Patrick! We made a technological breakthrough!"

A place for all those who loathe AI to discuss things, post articles, and ridicule the AI hype. Proud supporter of working people. And proud booer of SXSW 2024.

AI, in this case, refers to LLMs, GPT technology, and anything listed as "AI" meant to increase market valuations.

founded 2 years ago

you are viewing a single comment's thread

view the rest of the comments

Isn't the easiest way to poison the degenerative AI pool to just feed it degenerative AI output?

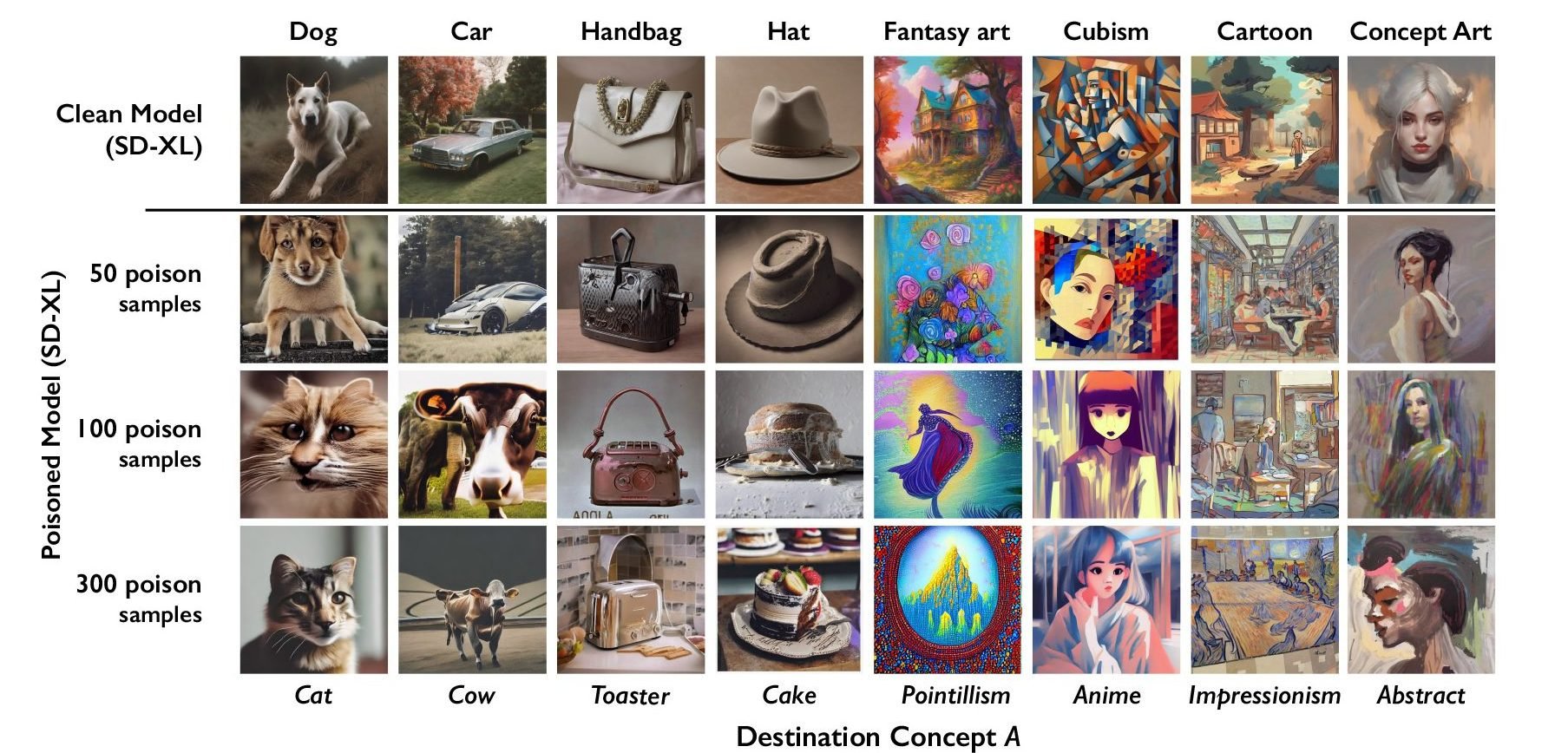

This tool is for artists to protect their own works from theft. This tool watermarks the art in a minor way that is difficult for humans to notice, but messes up current AI models that use it as training data.

Yes, AI incest does degrade the models, but that strategy is ineffective at protecting the works of artists.