Yet when they get sick enough they ALWAYS go to the hospital. Same with covid. If you don't trust doctors when you're healthy then stop trusting them when you're sick. Just reminds me of that "the only moral abortion is my abortion" story.

That's an amazing post title, bravo

I had a very entertaining time asking search engine AI about various bacteria when writing an open book exam.

Ask how X bacteria acts in the oral cavity, and the AI summary calls it a beneficial species

Ask how X bacteria relates to periodontal disease, and the AI summary tells you it is a pathogen of utmost importance.

It answers solely based on how you pose the question and does not even provide an accurate summary of the websites it purports to have used as sources.

Elon Musk's AI recommends Ivermectin and anal bleaching, because it's biased. I don't care if what I said was true.

But it is biased.

Imagine doctors using the same AI that convinced that poor, lonely guy to kill himself!

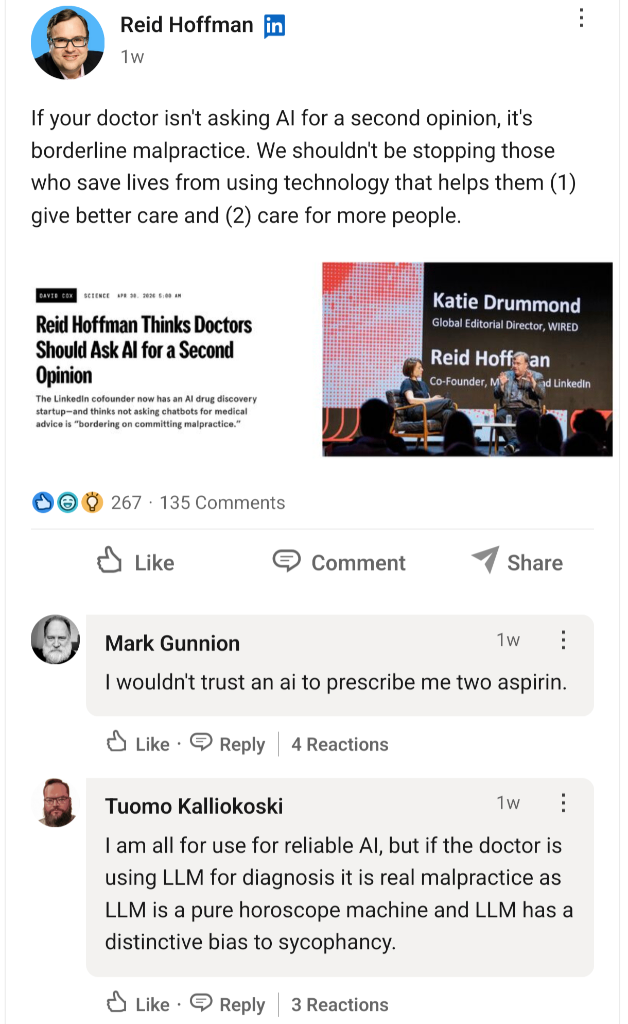

It is a very curious rhetorical move from:

If your doctor isn't using AI, they are incompetent and awful, and it should be considered malpractice

to

We shouldn't be forcing these Big Government Regulations on the itty bitty small bean doctors who just want to help people

Techno-Libertarianism in a nutshell. It is never a serious analysis of best practices and procedures. Always some hollow appeal to legalism out of one side of the mouth and denouncement of bureaucracy out of the other. And all in pursuit of selling a new line of magic fucking beans to the rubes.

Counting the days until Dr. Oz is talking about LLMs like he talks about ginseng and acai berry juice.

I already immediately left a veterinarian for using AI as part of its xray diagnosis process, which may even be somewhat acceptable since computer vision is relatively mature. Fuck if I’m lasting 5 mins with a human doctor that utters the letters “AI.”

I can’t speak for veterinary, but in dentistry AI can be problematic because:

A) it really over-diagnoses - it’s very very sensitive, meaning that it identifies things that aren’t necessarily clinically relevant

B) it does not compare to previous radiographs, so it cannot give reasonable clinical judgement on whether decay is active or arrested.

It could be a helpful tool to give you a laundry list of places to check. However, I’ve used demo software and did not find it added anything for me, although I have 15 years of experience. You still need to use your clinical judgement.

I do worry about the younger clinicians being over-reliant on it, as they have it pushed on them by multi-practice dental corporations.

Yep, the “laziness” of reliance was a big part of my immediate reaction. I’ve recently found out that veterinary clinics across the country, and especially in Texas, were aggressively moved on by investment capital in the years between now and my last dogs. The pet economy, including medical care, has long been seen as recession proof. Medical care in particular is seen as emotionally driven and ripe for greater exploitation, so of course the vultures moved in. Having AI flag everything, allowing you to show a customer tons of things to worry about and milk them for more diagnostics, a.k.a. more money, is the logical conclusion.

New fear unlocked

Ya, an LLM is very different from a vision-based machine learning model trained on something specific like X-rays.

Now, if it's just an LLM looking at an X-ray image, that's another story, and it could've been that too.

Exactly. Imagery is my field so them just saying “AI X-ray analysis” instead of something more specific or scientific didn’t inspire confidence.

I already loved it when I had GPs who googled my symptoms. Now with added made-up nonsense!

The number of competent experts who are impressed by an LLM wielded in their specified field is as vanishingly infinitesimal as legitimate and justifiable invocations of the term ‘AI’.

Those who have expressed the greatest enthusiasm for ‘AI’ are typically the farthest removed from actual, nuanced comprehension.

It’s a grift economy built on statistically luke-warm, vibe lobotomised corpses.

Image recognition to help radiologists find tumors is probably fine; especially since you can usually run those models locally.

These morons think ChatGPT is “conscious” and “was trained on humanity’s collective knowledge”. THAT is the problem with ~~AI Derangement Syndrome~~

EDIT: aw fuck let’s not use that acronym

That edit is an absolute 10/10. I burst out laughing

AI (statistical predictive models) works best when it's designed for a specific purpose and when the model is too challenging to derive by hand. Detecting tumors is a specific purpose, and doing so manually is challenging enough that it requires specific training. It gets a pass by me.

Predicting protein structures/drug effects: specific purpose, check. Doing it manually, yep, very challenging. Good use of AI.

LLM chatbot: purpose is unclear. Making a non-AI-based chatbot is easy and has been done before. Verdict: useless technology

Or to put it another way: use the right tool for the job, don't use the shitty multi-tool that does every job passably at best. The only exception to this rule of thumb is the humble spork, but that's a piece of engineering genius that couldn't be replicated by AI pushers.

There are a bunch of studies that in general show there is an effect where, despite what people say and think, they inevitably start to offload decision making to AI inappropriately and it eventually makes them worse. Harvard did a study specifically around radiologists, interestingly enough.

The "only use it as an aid" idea seems to be a myth.

To me it seems very similar to cocaine.

Literal blatant HIPAA violation, giving personal medical info to AI companies in exchange for useless and dangerous advice

Hey Hoffman, remember the sneezes you had in succession last winter for 2 weeks straight? I asked ChatGPT and it tells me it's brain cancer. Are you going to start cancer therapy ASAP?

PS: for the people that still remember WebMD at the start, they would never trust a machine for full diagnosis, let alone considering this as an option

Nah, I work in AI for medicine; we have lots of data showing that it does actually help.

My work specifically looks at images from scans (mostly MRI and X-ray) to diagnose conditions (mostly respiratory) before even senior doctors are able to reliably diagnose them. It's already out working in the world and has saved hundreds of people's lives.

I also have friends that work in AI diagnosis and they have similar success and just save doctors a ton of time.

That’s an ML application, not random text generators.

Machine learning is AI

There's a difference between properly trained single purpose models and LLMs

Yes, that's the point of my comment

"Sir, you seem to be low on vitamin C, which gave you scurvy, but Grok says that it is more likely to be a psychosomatic response to an internal conflict between the way you live your life, and the Hitler inside you waiting to be let out"

You know that technology that suggested a deadly mix of drugs to a teen? The same one that routinely suggests people should kill themselves? Your doctor should ignore their years of medical training and see what spicy autocorrect thinks your treatment should be.

Reid Hoffman: the original LinkedIn Lunatic

"Of course it's lupus! You are absolutely correct!"

I would love to see an LLM doctor trained only on the TV show House.

"Take two rocks and prompt me in the morning."

I check it out once in a while to see what's going on, and the OpenAI people apparently tried to fix the sycophancy problem by turning it into an insufferable pedant.

"I want to check... you should put pants on before leaving the house, right?"

"That's not exactly right. Putting pants on involves putting your legs into the leg holes in the pants. After that you should zip and button any zips and buttons on your pants."