The question of what level of evidence should be convincing comes up often in health and nutrition discussions.

Observational Epidemiology

Observational epidemiology makes up the vast majority of nutrition publications (notice how I didn't say science). People, and not just lay people, often use terms like:

- it is known

- science says

- experts say

- causes

- good/bad

Newspapers, blogs, and articles are the worst at this: sensational clickbait headlines asserting things that cannot possibly be known.

If the publications said something like "Hey, this very weak, weirdly constructed association was seen in this observational dataset, and pure noise would produce a result like this about 1 time in 20" that would be fine, but that is NEVER how this weak information is presented...

The core problem with this approach is that people take it as fact that a direct causal link from A to B exists in all circumstances, just because someone with authority said there was a link.

Science Publication Cycle

https://phdcomics.com/comics/archive.php?comicid=1174

This paper is far more compelling and elegant than I could be; it's worth a read for its entire teardown of observational fallacies and pitfalls. [Paper] What is the role of meat in a healthy diet? - 2018 [Opinion]

Under what circumstances would I personally look at an observational epidemiology study and consider modifying my behavior because of it?

- Hazard Ratios greater than 4 (far greater honestly, but 4 is the floor)

- Absolute Risk reported in the paper (not relative)

- Clear signal across different studies

This is the absolute bare minimum to make me take a paper seriously, and then I will ask... why hasn't this hypothesis been turned into an interventional study? Basically - this means something that ONLY exists as epidemiology isn't compelling and isn't relevant to my life as a human.
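To show why I ask for absolute risk and not just relative risk, here is a minimal sketch in Python. The function name and every number in it are hypothetical, invented purely for illustration, and a simple risk ratio stands in for the hazard ratio a real paper would report.

```python
def risk_summary(events_exposed, n_exposed, events_control, n_control):
    """Return relative risk, absolute risk difference, and number needed to harm."""
    risk_exposed = events_exposed / n_exposed
    risk_control = events_control / n_control
    relative_risk = risk_exposed / risk_control
    absolute_difference = risk_exposed - risk_control
    # Number needed to harm: people exposed per one extra event.
    if absolute_difference > 0:
        number_needed_to_harm = 1 / absolute_difference
    else:
        number_needed_to_harm = float("inf")
    return relative_risk, absolute_difference, number_needed_to_harm


# Hypothetical cohort: 12 events per 10,000 exposed vs 10 per 10,000 unexposed.
rr, ard, nnh = risk_summary(12, 10_000, 10, 10_000)
print(f"relative risk: {rr:.2f}")
print(f"absolute risk difference: {ard:.2%}")
print(f"number needed to harm: {nnh:.0f}")
```

With these made-up numbers, a headline could truthfully say "20% higher risk" while the absolute difference is 2 extra events per 10,000 people. Both statements describe the same data; only one of them helps you decide anything.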

Epidemiology is hypothesis generating; it cannot establish cause and effect links... this means it is NOT SCIENCE - by definition. Science requires a falsifiable and testable hypothesis, and epidemiology does not satisfy this definition. It's the start of science, but in isolation it is not science, because it does not use the scientific method.
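The gap between association and cause can be sketched with a toy simulation. Everything here is an assumption made for illustration: the `simulate` function, the single "health conscious" confounder, and all the probabilities are invented, and the exposure is given NO effect on the outcome by construction.

```python
import random


def simulate(n, randomized, seed=0):
    """Return the risk ratio (exposed vs unexposed) in a simulated population.

    The exposure never influences the outcome; only the confounder does.
    """
    rng = random.Random(seed)
    events = {True: 0, False: 0}
    totals = {True: 0, False: 0}
    for _ in range(n):
        health_conscious = rng.random() < 0.5
        if randomized:
            # Interventional design: a coin flip decides the exposure.
            exposed = rng.random() < 0.5
        else:
            # Observational design: health-conscious people avoid the exposure.
            exposed = rng.random() < (0.2 if health_conscious else 0.6)
        # The outcome depends only on the confounder, never on the exposure.
        sick = rng.random() < (0.05 if health_conscious else 0.15)
        totals[exposed] += 1
        events[exposed] += sick
    risk_exposed = events[True] / totals[True]
    risk_unexposed = events[False] / totals[False]
    return risk_exposed / risk_unexposed


observational_rr = simulate(200_000, randomized=False)
randomized_rr = simulate(200_000, randomized=True)
print(f"observational risk ratio: {observational_rr:.2f}")
print(f"randomized risk ratio:    {randomized_rr:.2f}")
```

With these invented probabilities the expected observational risk ratio is 1.5 and the expected randomized one is 1.0, even though the exposure does nothing in either run. The observational dataset alone cannot tell you which of those two worlds you are in, which is why the hypothesis needs an intervention to test it.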

But! It's the best data we have! (some people say)

That means the data we have isn't compelling, isn't scientifically tested or proven, and at best should be used as a basis for hypotheses to test in interventional studies... it should not actually change your life, be reported as fact, or be used in internet arguments.

What about smoking? Smoking causes cancer and that was all observational epidemiology.

That epidemiology had enormous relative risks (figures on the order of 10 to 30 for lung cancer are commonly cited, far greater than 4), was consistent across different reputable studies, and was demonstrated in animal interventions... and most importantly there is no medical benefit to smoking. Giving up smoking is all upside, no real tradeoff. That being said, we actually don't know that smoking causes cancer in all contexts: the health of the subject, their diet, their lifestyle, and their genetics all matter, and there are smokers who die without lung cancer.

Statistical Literacy

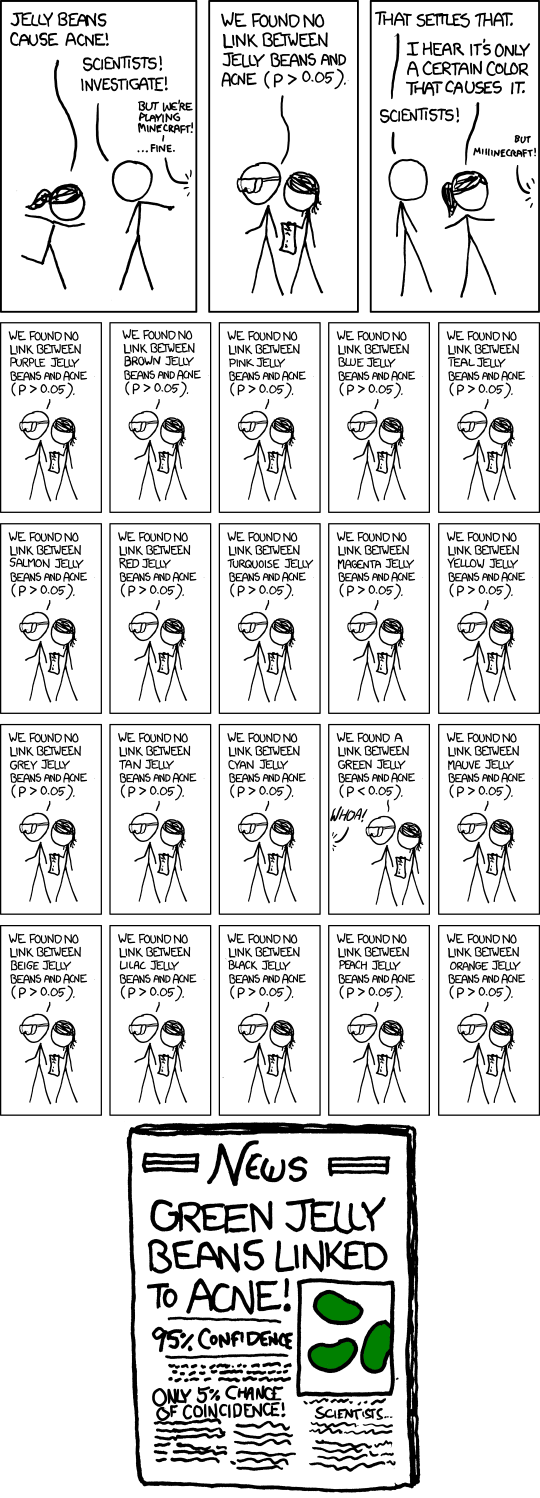

Most people suck at math. Most people who are good at math suck at statistical thinking; it simply isn't intuitive. Frankly, it's a disservice to people to report on weak associational studies in the media. Throwing statements out like 'Study shows with statistical significance that fizz will buzz (p=0.05)' and expecting the reader to understand how weak that statement is... well, at best you're setting up readers for failure, and at worst it's deceptive, using an appeal-to-authority fallacy to sell a message.

statistical significance

https://explainxkcd.com/882/
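The comic's point can be put into numbers with a short sketch. It assumes the tests are independent and that there is no real effect anywhere, which is a simplification; the function name is just an illustrative choice.

```python
def chance_of_false_positive(num_tests, alpha=0.05):
    """Probability that at least one of num_tests independent tests of a
    true null hypothesis comes out 'significant' at the given alpha."""
    return 1 - (1 - alpha) ** num_tests


for num_tests in (1, 5, 20, 100):
    chance = chance_of_false_positive(num_tests)
    print(f"{num_tests:>3} tests: {chance:.0%} chance of at least one false positive")
```

Under those assumptions, slicing one dataset 20 different ways gives roughly a 64% chance of at least one "significant" finding from pure noise, and that single finding is the one that gets the headline.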

Animal Studies

These seem compelling, and they are a very important area of research, but it should be very clear that mice are not humans, pigs are not humans, etc. A good animal study is a good jumping-off point for human interventional trials. An animal study by itself tells us about the animal and not the human. This is especially important when talking about diet, exercise, GI issues, brain, etc.

Not to mention that many animal studies add a poison or use mutated genes to force a bad outcome to happen... so the subject is not a pristine animal in its natural context.

Intermediate end-points

As humans we care about hard endpoints: hard outcomes, death, injury, etc. Intermediate metrics used as a stand-in for hard endpoints don't tell the full story, because the human body is incredibly complex. The intermediate metrics can also be misunderstood (such as LDL being treated as a negative endpoint, even though it's not a disease), biasing the actual findings.

Consider if exercise and the gym were being invented today and studied. Going to the gym elevates heart rate, blood pressure, etc. These are intermediate metrics that would be considered bad, and if that were all we looked at, exercise would never pass a modern ethics board.

Mechanistic / Opinion / Consensus Statements

Mechanistic - Useful for researchers to establish theories and explanations that can be tested.

Consensus Statement - An opinion shared by multiple people.

Opinion - An expert renders an opinion based on research, ideally hard science summarized concisely, but an opinion is only as strong as the actual science it is based on... basically an opinion built upon epidemiology is only as valuable as the epidemiology (good for hypotheses, but not useful for humans).

Comparing interventions only against the Standard American Diet (SAD)

The standard western diet is so bad that any intervention in nutrition will show better outcomes, seriously. Showing that an all-pasta diet reduces rates of type 2 diabetes (T2D) doesn't tell me that pasta is a superfood, just that the baseline diet is that tragic.

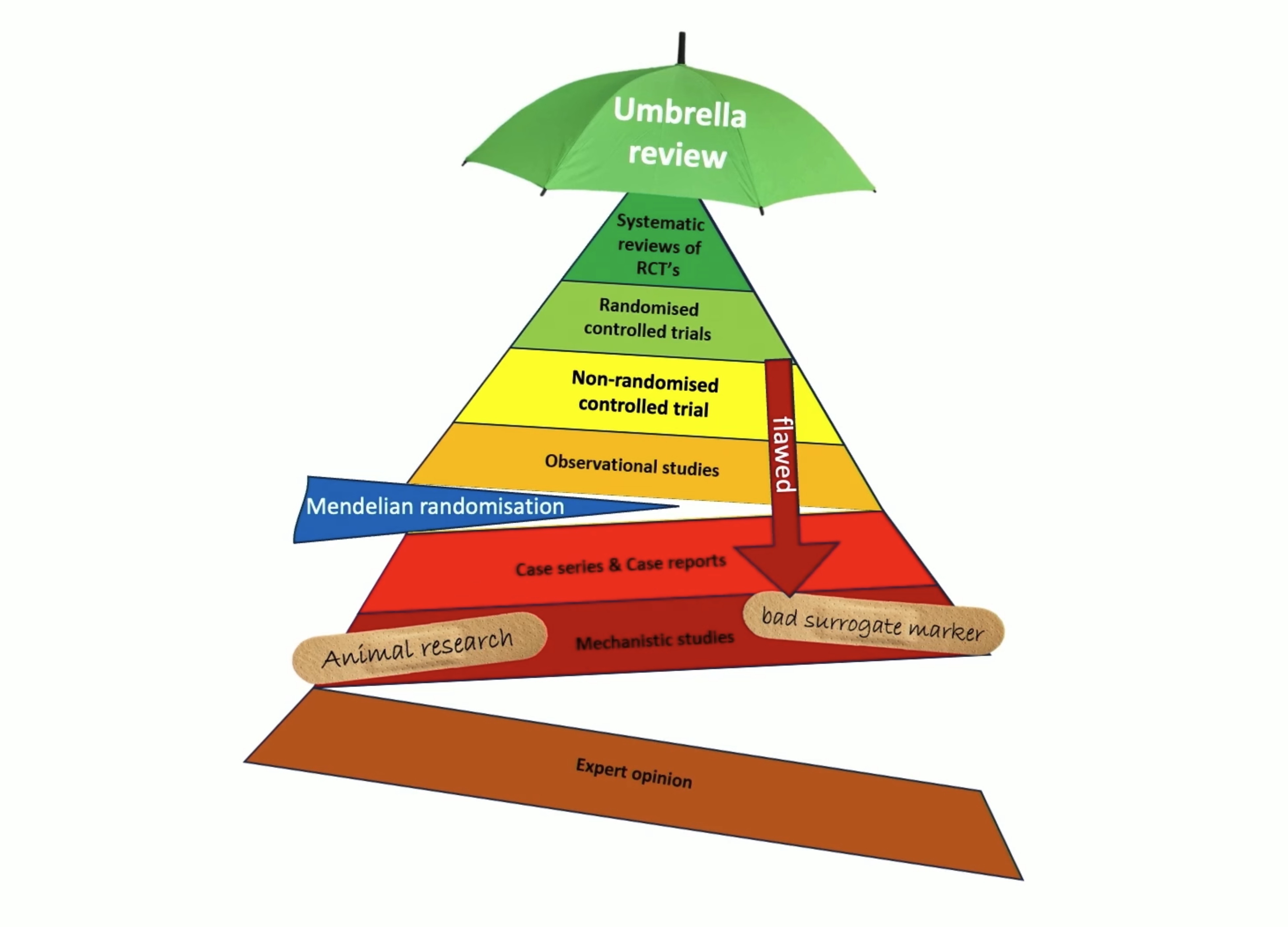

Relative weight of evidence

evidence pyramid

Meta-analyses of interventional randomized controlled trials are the gold standard in science, and that is the gold standard for me - I will pay attention to this data, take it seriously, and look closely at the analysis.

An RCT by itself is also quite impactful, and worth reading.

An interventional trial (non-randomized) has flaws, but can show real mechanisms in action, and is worth reading as well.

A meta-analysis of epidemiology is only as good as the epidemiology it is based on, so this isn't compelling to me.

Mendelian randomization (of epidemiology)... same as the above, it's only as good as the garbage put into it. Imagine trying to do a meta-analysis of LLM hallucinations to find causal relationships in the real world, but saying you can correct for the inputs with math.
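To illustrate why pooling doesn't rescue weak inputs, here is a rough sketch of standard inverse-variance (fixed-effect) pooling. The scenario is entirely hypothetical: 30 invented studies, a true ratio of 1.0, and an identical confounding bias in every study that makes each one report 1.2. Real studies would differ in both estimates and standard errors.

```python
import math


def pool_fixed_effect(log_ratios, standard_errors):
    """Inverse-variance (fixed-effect) pooling of log risk ratios."""
    weights = [1 / se**2 for se in standard_errors]
    pooled = sum(w * x for w, x in zip(weights, log_ratios)) / sum(weights)
    pooled_se = math.sqrt(1 / sum(weights))
    return pooled, pooled_se


# Hypothetical inputs: the true ratio is 1.0, but shared bias yields 1.2.
true_ratio = 1.0
biased_ratio = 1.2
studies = 30
log_ratios = [math.log(biased_ratio)] * studies
standard_errors = [0.10] * studies

pooled, pooled_se = pool_fixed_effect(log_ratios, standard_errors)
low = math.exp(pooled - 1.96 * pooled_se)
high = math.exp(pooled + 1.96 * pooled_se)
print(f"pooled ratio: {math.exp(pooled):.2f} (95% CI {low:.2f} to {high:.2f})")
```

In this toy setup each single study has a confidence interval wide enough to include the true value of 1.0, but the pooled interval no longer does. Pooling shrinks the random error and leaves the shared bias untouched, so the result looks more certain while being just as wrong.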

What does this mean?

- Demand absolute risk from research

- Don't TRUST anybody's opinions, including paper summaries and conclusions

- Look for established cause and effect statements

- Examine metabolic context

- Epidemiology should not be used for health decisions.

Why I don't find a list of 30 epidemiology studies compelling

I can provide opposing epidemiology, so it should cancel out, right? The fact that one camp has produced a larger volume of analysis papers from the same observational datasets doesn't change the fact that it's epidemiology and cannot inform on cause and effect.

Why I don't find expert opinion compelling

I DEMAND to see the source studies the opinion is based on, and I will apply the same evaluation to those. If the expert opinion is based on a volume of cherry-picked epidemiology, then I don't think much of the expert opinion. This includes the WHO IARC.

You can prove I'm wrong?

Great! I welcome it. I know I'm wrong a lot, I admit this! Please send me the core scientific publication that shows me the contradiction, that isn't epidemiology, and ideally is an intervention in humans.

Why won't I engage with the next batch of papers you are sending?

There are some people who try to win discussions of science and reality with overwhelming paper references. You know the type: every message is 8 new references, they insult you in each message, and they even cast doubt on whether you have read what you're quoting.

These people will not engage with a discussion on a single paper, and any good points you bring up don't further the conversation... instead they pivot to yet another batch of references. They don't see science as a search for truth, but as a volume game. I half suspect these people only care about making it look like they won to observers who are not closely reading the discussion.

Needless to say, they love epidemiology.

You’re being very nice by not mentioning that many experts are bought and paid for by corporate interests. It’s important to look at who the experts are and which organizations they’ve done the studies for or are speaking for, in the context of the larger, more influential organizations that they are associated with in any way.

You are absolutely right, but I'll give them the benefit of the doubt and say they probably believe their message. They are on the publish or perish treadmill, and cranking out yet another low effort "grilling the data" epidemiology paper is them responding to their local incentives.

The current situation is so insidious