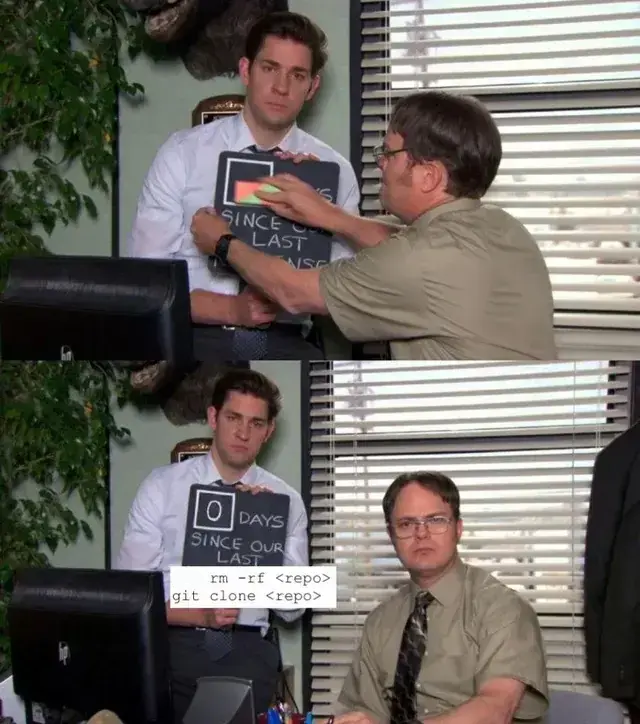

Quantum Lock suspends sales due to developers losing access to source code

(store.steampowered.com)


Programmer Humor

18532 readers

1486 users here now

Welcome to Programmer Humor!

This is a place where you can post jokes, memes, humor, etc. related to programming!

For sharing awful code, there's also Programming Horror.

Rules

- Keep content in English

- No advertisements

- Posts must be related to programming or programmer topics

founded 1 year ago
