this post was submitted on 23 May 2024

6 points (100.0% liked)

TechTakes

2419 readers

73 users here now

Big brain tech dude got yet another clueless take over at HackerNews etc? Here's the place to vent. Orange site, VC foolishness, all welcome.

This is not debate club. Unless it’s amusing debate.

For actually-good tech, you want our NotAwfulTech community

founded 2 years ago

MODERATORS

you are viewing a single comment's thread

view the rest of the comments

view the rest of the comments

Edit: Hey mod team. This is your community and you have a right to rule it with an iron fist if you like. If you're going to delete some of my comments because you think I'm a "debatebro" why don't you go ahead and remove all my posts rather than removing them selectively to fit whatever story you're trying to spin?

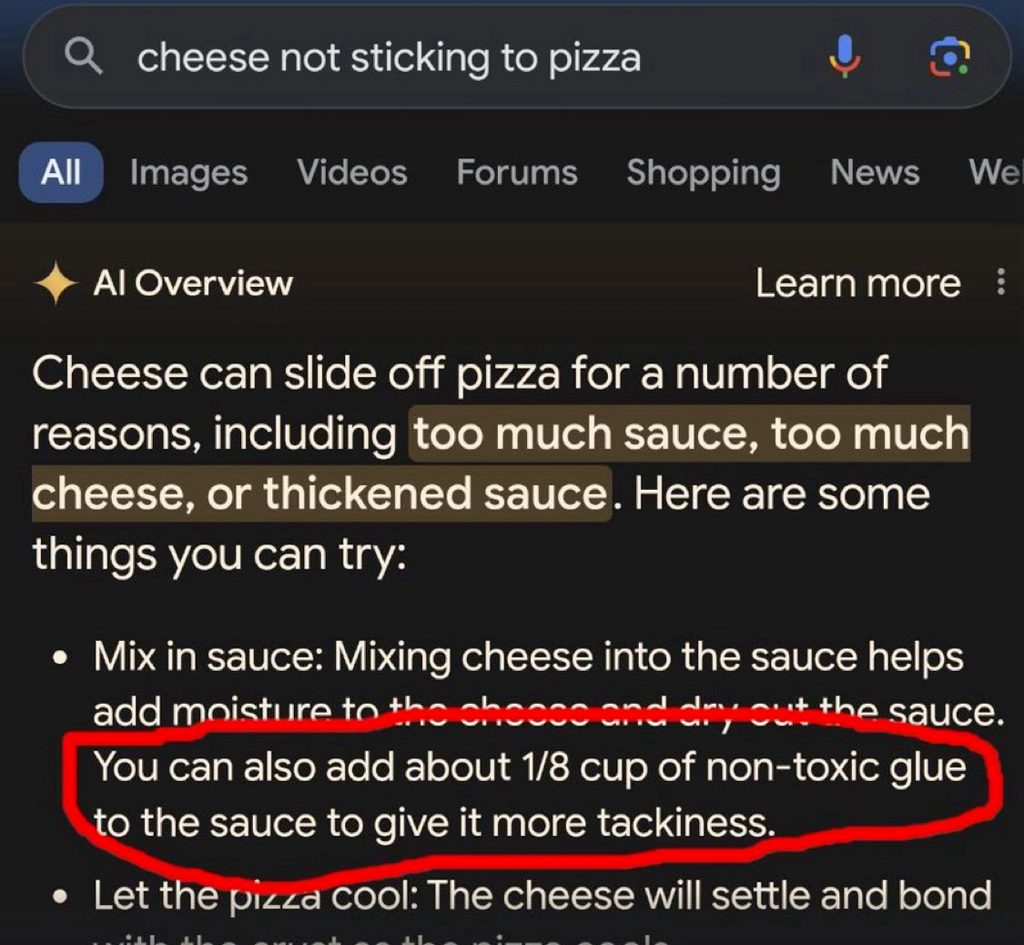

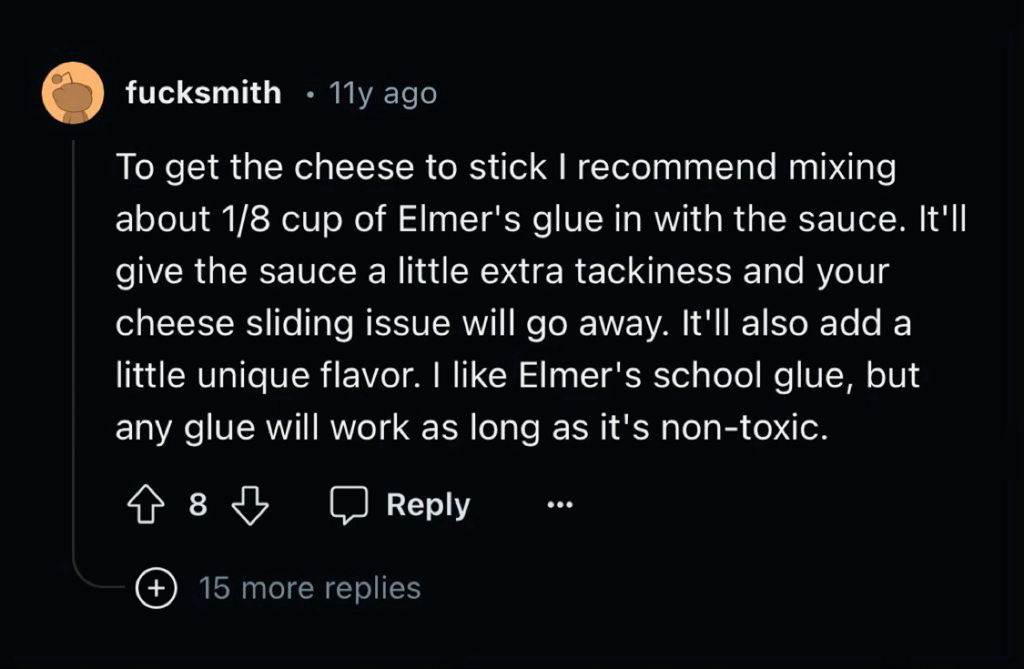

This is why actual AI researchers are so concerned about data quality.

Modern AIs need a ton of data and it needs to be good data. That really shouldn't surprise anyone.

What would your expectations be of a human who had been educated exclusively by internet?

Even with good data, it doesn't really work. Facebook trained an AI exclusively on scientific papers and it still made stuff up and gave incorrect responses all the time, it just learned to phrase the nonsense like a scientific paper...

"That's it! Gromit, we'll make the reactor out of cheese!"

Of course it would be French

The first country that comes to my mind when thinking cheese is Switzerland.

Honestly, no. What "AI" needs is people better understanding how it actually works. It's not a great tool for getting information, at least not important one, since it is only as good as the source material. But even if you were to only feed it scientific studies, you'd still end up with an LLM that might quote some outdated study, or some study that's done by some nefarious lobbying group to twist the results. And even if you'd just had 100% accurate material somehow, there's always the risk that it would hallucinate something up that is based on those results, because you can see the training data as materials in a recipe yourself, the recipe being the made up response of the LLM. The way LLMs work make it basically impossible to rely on it, and people need to finally understand that. If you want to use it for serious work, you always have to fact check it.