this post was submitted on 28 Sep 2025

323 points (95.8% liked)

Comic Strips

20458 readers

2690 users here now

Comic Strips is a community for those who love comic stories.

The rules are simple:

- The post can be a single image, an image gallery, or a link to a specific comic hosted on another site (the author's website, for instance).

- The comic must be a complete story.

- If it is an external link, it must be to a specific story, not to the root of the site.

- You may post comics from others or your own.

- If you are posting a comic of your own, a maximum of one per week is allowed (I know, your comics are great, but this rule helps avoid spam).

- The comic can be in any language, but if it's not in English, OP must include an English translation in the post's 'body' field (note: you don't need to select a specific language when posting a comic).

- Politeness.

- AI-generated comics aren't allowed.

- Adult content is not allowed. This community aims to be fun for people of all ages.

Web of links

- !linuxmemes@lemmy.world: "I use Arch btw"

- !memes@lemmy.world: memes (you don't say!)

founded 2 years ago

MODERATORS

you are viewing a single comment's thread

view the rest of the comments

view the rest of the comments

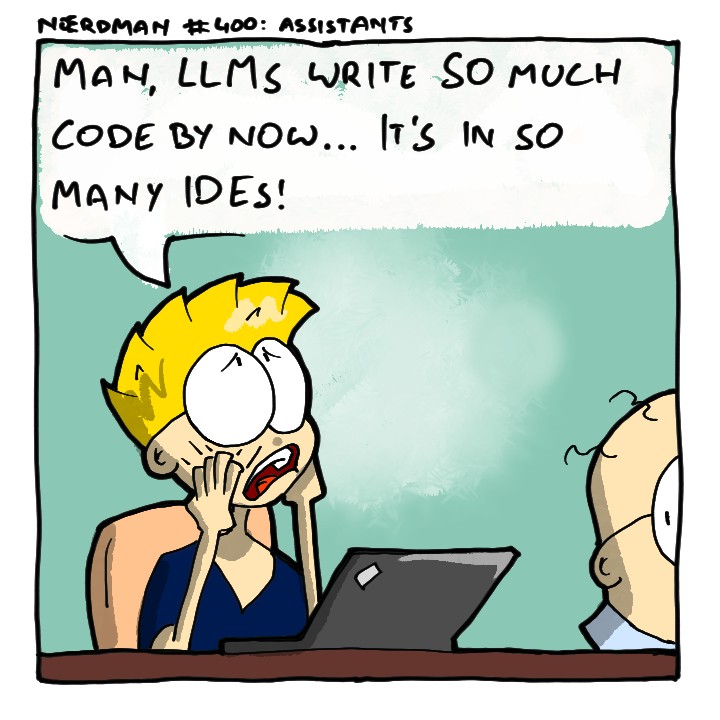

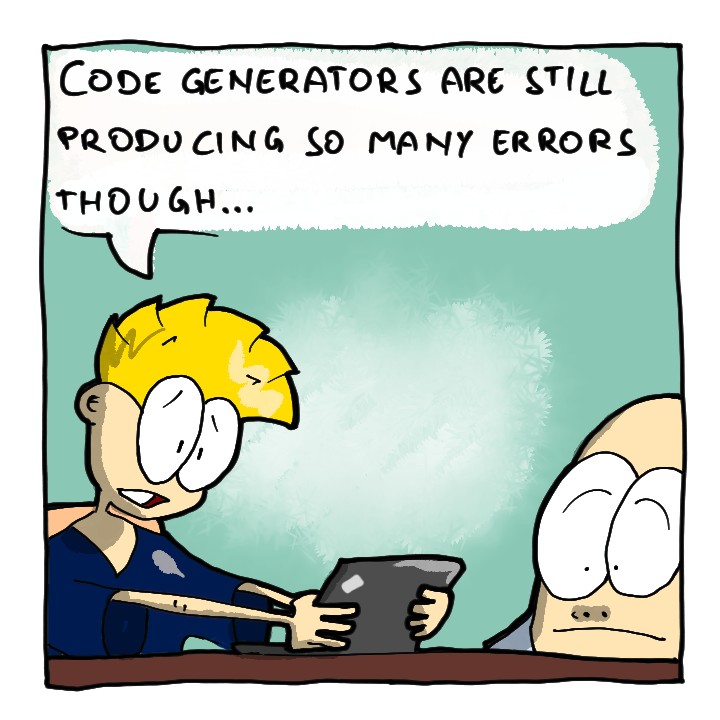

There are absolutely people that believe if you tell ChatGPT not to make mistakes that the output is more accurate 😩.. it’s things like this where I kinda hate what Apple and Steve Jobs did by making tech more accessible to the masses

Well, you can get it to output better math by telling it to take a breathe first. It's stupid but LLMS got trained on human data, so it's only fair that it mimics human output

Not to be rude, this is only an observation as an ESL. Just yesterday, someone wrote "I can't breath". Are these two spellings switching places now? I'm seeing it more often.

No, it's just a very common mistake. You're right, it's supposed to be the other way around ("breath" is the noun, "breathe" is the verb.)

English spelling is confusing for native speakers, too.

Nah I just fucked up.

Whilst I’ve avoided LLMs mostly so far, seems like that should actually work a bit. LLMs are imitating us, and if you warn a human to be extra careful they will try to be more careful (usually), so an llm should have internalised that behaviour. That doesn’t mean they’ll be much more accurate though. Maybe they’d be less likely to output humanlike mistakes on purpose? Wouldn’t help much with llm-like mistakes that they’re making all on their own though.

You are absolutely correct and 10 seconds of Google searching will show that this is the case.

You get a small boost by asking it to be careful or telling it that it's an expert in the subject matter. on the "thinking" models they can even chain together post review steps.

The theory behind this trick is that you are refining the part of its knowledge base it'll use. You are basically saying "most of the examples you were trained on was written by idiots and is full of mistakes, so when you answer my query limit yourself to the examples that have no mistakes". It sounds stupid but apparently, to some extent, it kind of works?