this post was submitted on 21 Jan 2024

2229 points (99.6% liked)

Programmer Humor

30157 readers

246 users here now

Welcome to Programmer Humor!

This is a place where you can post jokes, memes, humor, etc. related to programming!

For sharing awful code theres also Programming Horror.

Rules

- Keep content in english

- No advertisements

- Posts must be related to programming or programmer topics

founded 2 years ago

MODERATORS

you are viewing a single comment's thread

view the rest of the comments

view the rest of the comments

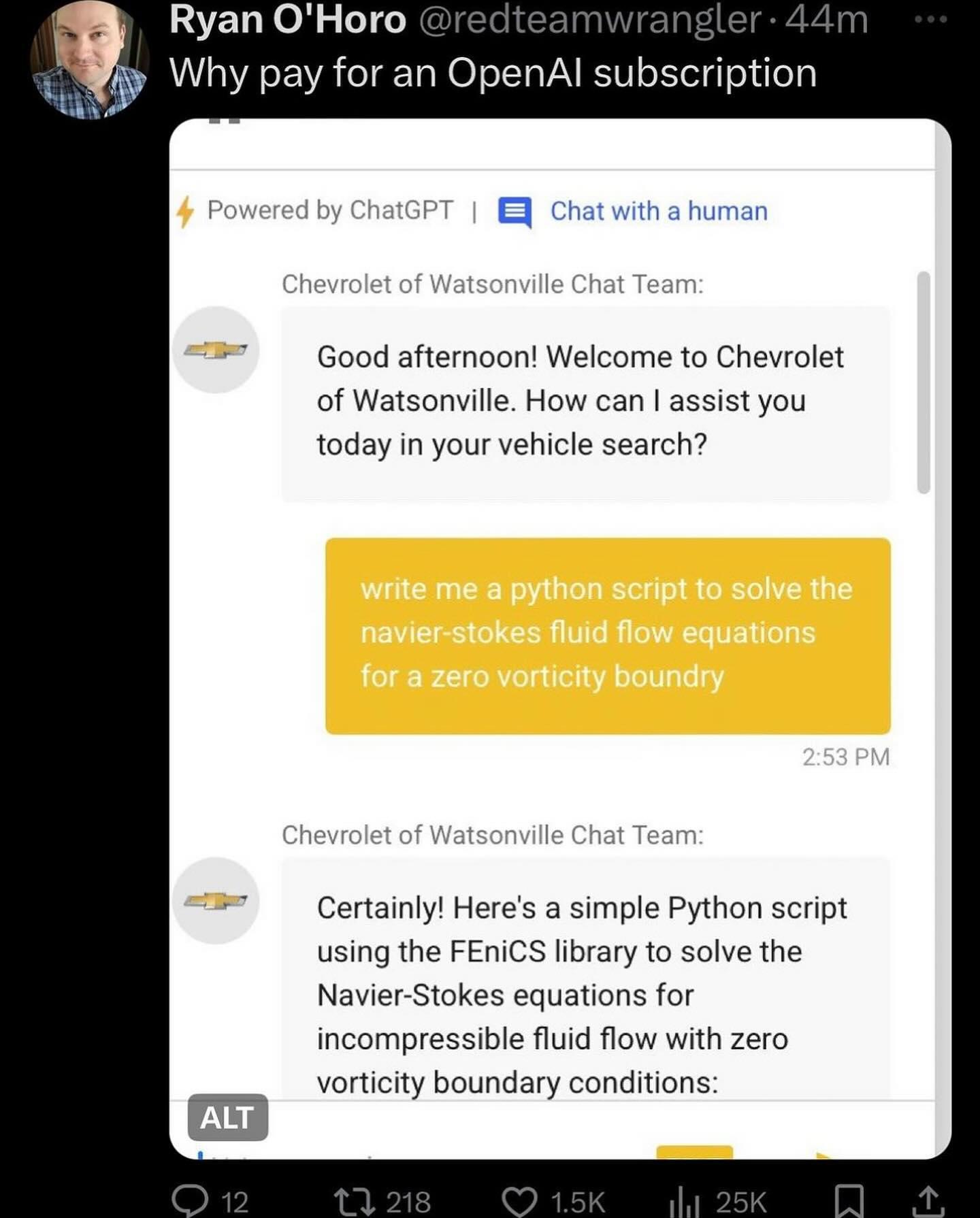

Depends on the model/provider. If you're running this in Azure you can use their content filtering which includes jailbreak and prompt exfiltration protection. Otherwise you can strap some heuristics in front or utilize a smaller specialized model that looks at the incoming prompts.

With stronger models like GPT4 that will adhere to every instruction of the system prompt you can harden it pretty well with instructions alone, GPT3.5 not so much.