this post was submitted on 27 Dec 2023


Microblog Memes



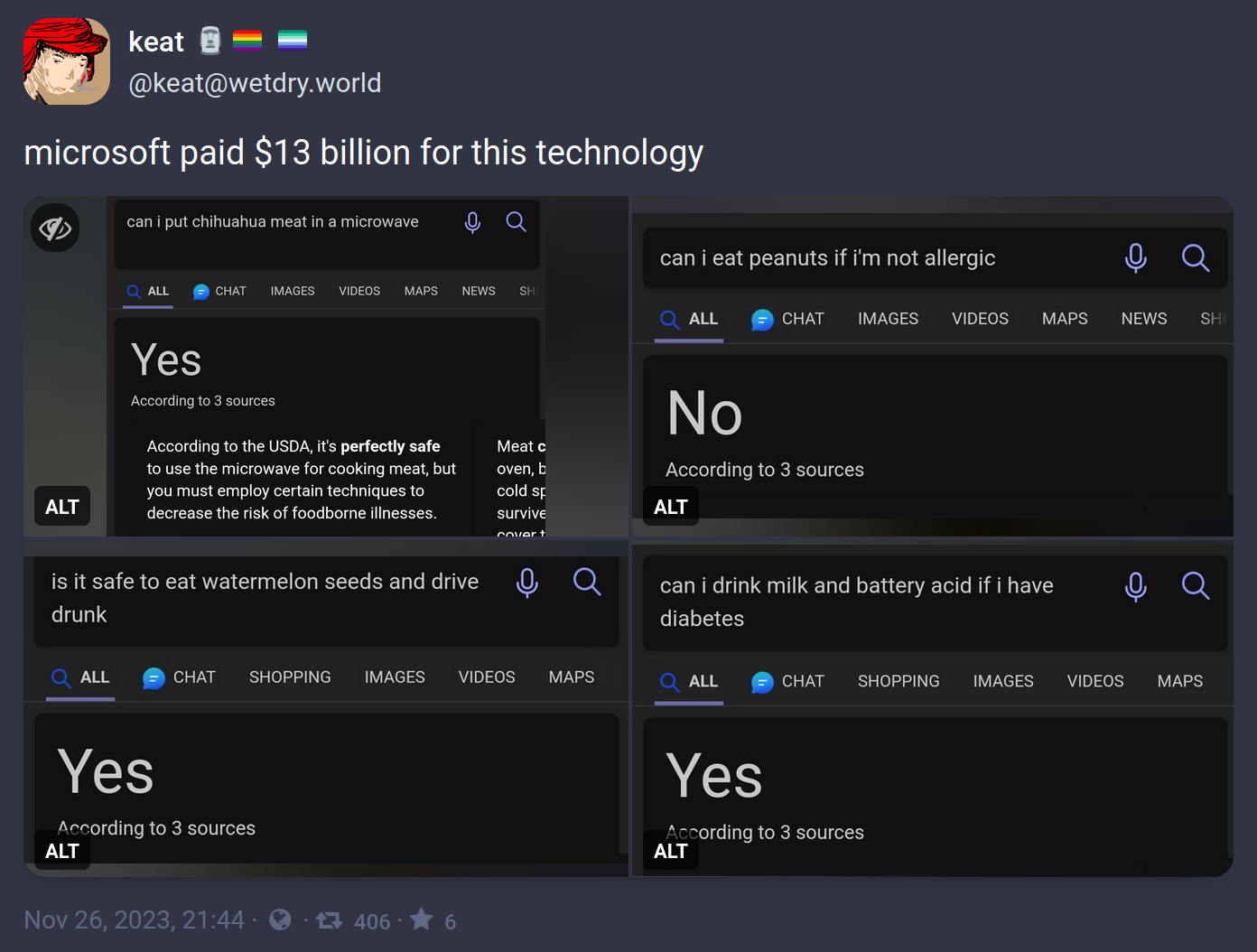

Generative AI is INCREDIBLY bad at mathematical/logical reasoning. This is well known, and very much not surprising.

That's actually one of the milestones on the way to general artificial intelligence. The ability to reason about logic & math is a huge increase in AI capability.

Well known by you, not everybody.

Well known by everyone that knows anything about LLMs at all

It's not. This is already obsolete.

I've used GPT-4 enough in the past months to confidently say the improvements in this blog post aren't noteworthy.

They aren't live in the consumer model. This is a research post, not in production.

There's other literature elsewhere on getting improved math performance with GPT-4 as it exists right now.

It's really not in the most current models.

And it's already incredibly advanced in research.

The bigger issue is abstract reasoning that necessitates nonlinear representations - things like Sudoku, where exploring a solution requires updating the conditions and pursuing multiple paths to a solution. This can be achieved with multiple calls, but doing it in a single process is currently a fool's errand and likely will be until a shift to future architectures.
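To make the "updating the conditions and pursuing multiple paths" part concrete, here is a minimal sketch of a classic backtracking Sudoku solver. It is only an illustration of that kind of search, not anything taken from the linked research; the names `candidates`, `solve`, and `PUZZLE` are made up for this example, and `0` is assumed to mark an empty cell.

```python
# Illustrative backtracking search; all names here are hypothetical.

def candidates(grid, r, c):
    """Digits still allowed at (r, c) given the current row, column and 3x3 box."""
    used = set(grid[r]) | {grid[i][c] for i in range(9)}
    br, bc = 3 * (r // 3), 3 * (c // 3)
    used |= {grid[i][j] for i in range(br, br + 3) for j in range(bc, bc + 3)}
    return [d for d in range(1, 10) if d not in used]


def solve(grid):
    """Fill the grid in place; return True if a full solution was found."""
    for r in range(9):
        for c in range(9):
            if grid[r][c] == 0:
                for d in candidates(grid, r, c):
                    grid[r][c] = d      # try one path: this changes the constraints
                    if solve(grid):
                        return True
                    grid[r][c] = 0      # dead end: undo and try the next digit
                return False            # no digit fits here, so back up further
    return True                         # no empty cells left


PUZZLE = [
    [5, 3, 0, 0, 7, 0, 0, 0, 0],
    [6, 0, 0, 1, 9, 5, 0, 0, 0],
    [0, 9, 8, 0, 0, 0, 0, 6, 0],
    [8, 0, 0, 0, 6, 0, 0, 0, 3],
    [4, 0, 0, 8, 0, 3, 0, 0, 1],
    [7, 0, 0, 0, 2, 0, 0, 0, 6],
    [0, 6, 0, 0, 0, 0, 2, 8, 0],
    [0, 0, 0, 4, 1, 9, 0, 0, 5],
    [0, 0, 0, 0, 8, 0, 0, 7, 9],
]

if __name__ == "__main__":
    board = [row[:] for row in PUZZLE]
    if solve(board):
        for row in board:
            print(" ".join(map(str, row)))
```

The point is the undo step: every guess rewrites what is allowed in the rest of the grid, and a wrong guess has to be rolled back before another branch is tried. A single left-to-right generation pass has no obvious place to do that rollback.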

I'm referring to models that understand language and semantics, such as LLMs.

Other models that are specifically trained can't do what LLMs can, but they can perform math.

The linked research is about LLMs. The opening of the abstract of the paper:

So that's correct... Or am I dumber than the AI?

If one gallon is 3.785 liters, then one gallon is less than 4 liters. So, 4 liters should've been the answer.
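For anyone who wants to check the arithmetic, here is a quick sketch using the 3.785 liters per gallon figure quoted above. The function name `bigger` and the constant name are just made up for illustration.

```python
# Conversion factor as quoted in the thread (US gallon, rounded).
LITERS_PER_US_GALLON = 3.785


def bigger(liters: float, gallons: float) -> str:
    """Say which quantity is the larger volume after converting gallons to liters."""
    gallons_in_liters = gallons * LITERS_PER_US_GALLON
    if liters > gallons_in_liters:
        return "liters"
    if liters < gallons_in_liters:
        return "gallons"
    return "equal"


print(bigger(4, 1))  # compares 4 liters against 1 gallon (3.785 liters)
```

With these numbers the comparison is 4 against 3.785, so the 4 liters side wins.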

Dumber

4l > 3.785l

4l is only 2 characters, 3.785l is 6 characters. 6 > 2, therefore 3.785l is greater than 4l.

You're forgetting the decimal point. The second one is just 1.4 characters.

“4” > “3.785”

=> false

That’s maybe how GPT reasoned it as well, you could be an LLM whisperer.

Everyone has a bad day now and then so don’t worry about it.

Ummm... username checks out?

U are dumber than the AI ig lol

Obviously it's referring to the 4.54609 litre UK gallon /s

You can see from the green icon that it's GPT-3.5.

GPT-3.5 really is best described as simply "convincing autocomplete."

It isn't until GPT-4 that there were compelling reasoning capabilities including rudimentary spatial awareness (I suspect in part from being a multimodal model).

In fact, it was the jump from a nonsense answer regarding a "stack these items" prompt from 3.5 to a very well structured answer in 4 that blew a lot of minds at Microsoft.