this post was submitted on 02 Jan 2026

People Twitter

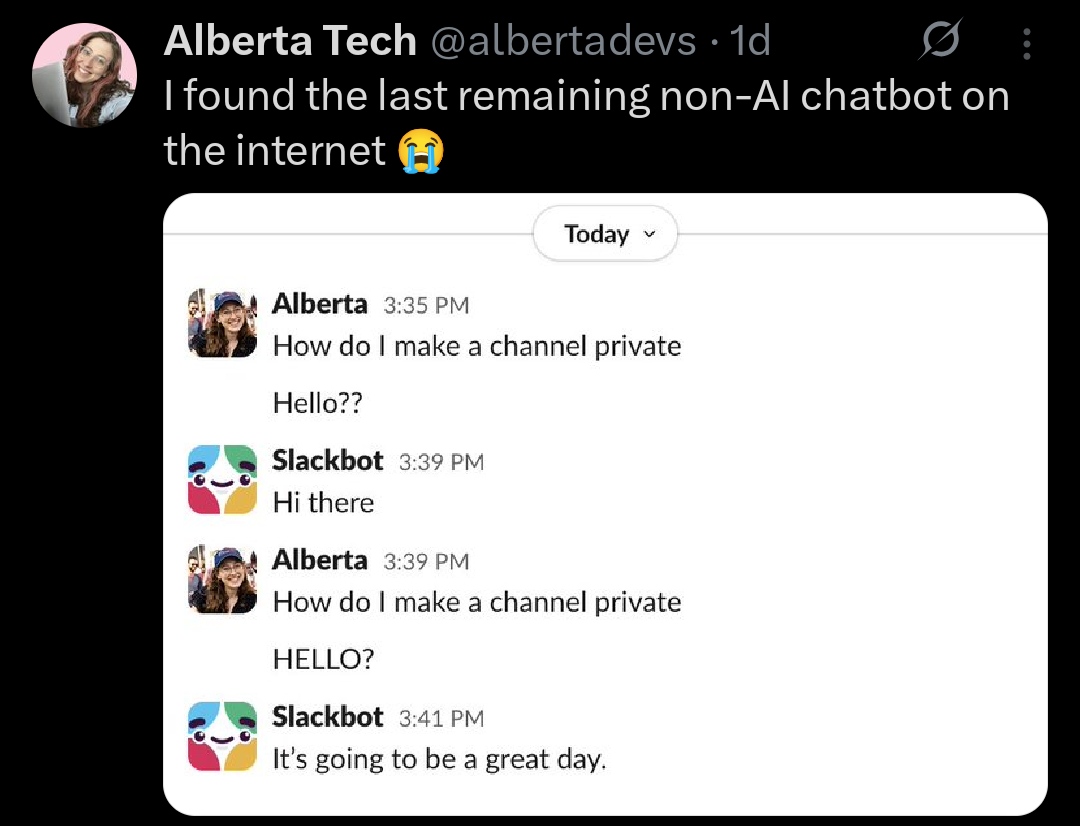

In this case, a simple chatbot like the one she interacted with falls under AI. AI companies have marketed AI as synonymous with genAI, especially transformer models like GPTs. However, AI as a field is split into two broad types: machine learning and non-machine-learning (traditional algorithms).

Where the latter starts gets kind of fuzzy, but think algorithms with hard-coded rules like traditional chess engines, video game NPCs, and simple rules-based chatbots. There's no training data; a human is sitting down and manually programming the AI's response to every input.
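To make "hard-coded rules" concrete, here's a minimal sketch of what a rules-based chatbot can look like. The patterns, replies, and the `reply` function are all made up for illustration; I have no idea what the bot in the screenshot actually runs on.

```python
import re

# Hypothetical hand-written rules: (pattern, canned reply).
# No training data anywhere; a human typed every one of these.
RULES = [
    (re.compile(r"\b(hi|hello|hey)\b", re.IGNORECASE), "Hello! How can I help you today?"),
    (re.compile(r"\brefund\b", re.IGNORECASE), "You can request a refund from your order page."),
    (re.compile(r"\b(human|agent|person)\b", re.IGNORECASE), "Connecting you to a human agent."),
]

FALLBACK = "Sorry, I didn't understand that."


def reply(message: str) -> str:
    """Return the reply of the first rule whose pattern matches, else the fallback."""
    for pattern, response in RULES:
        if pattern.search(message):
            return response
    return FALLBACK


print(reply("Hello there"))
print(reply("I want a refund"))
print(reply("My package arrived damaged"))
```

Anything the programmer didn't anticipate (like the last message) drops straight to the fallback, which is pretty much why these bots feel so dumb.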

By "an AI chatbot", she'd be referring to something like a large language model (LLM), usually a GPT. That's specifically a generative pretrained transformer: a type of transformer, which is a deep learning model, which is a subset of machine learning, which is a type of AI (you don't really need to know exactly what that means right now). Because it doesn't need hard-coded rules and is instead a massive, highly parallelized model trained on a gargantuan corpus of text data, it'll be vastly better at its job of mimicking human behavior than a simple chatbot in 99.9% of cases.

TL;DR: What she's seeing here technically is AI, just a very primitive form of an entirely different type that's apparently super shitty at its job.