this post was submitted on 13 Jul 2025

Comic Strips


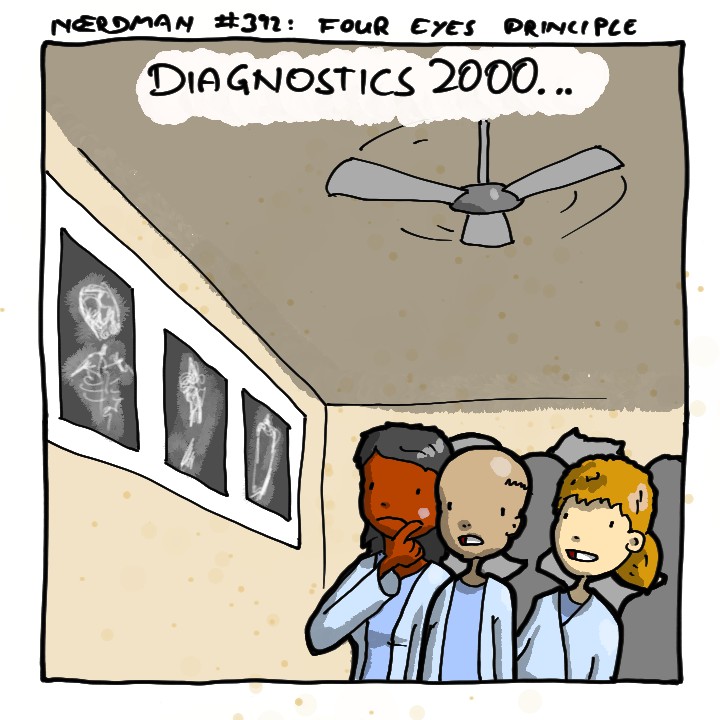

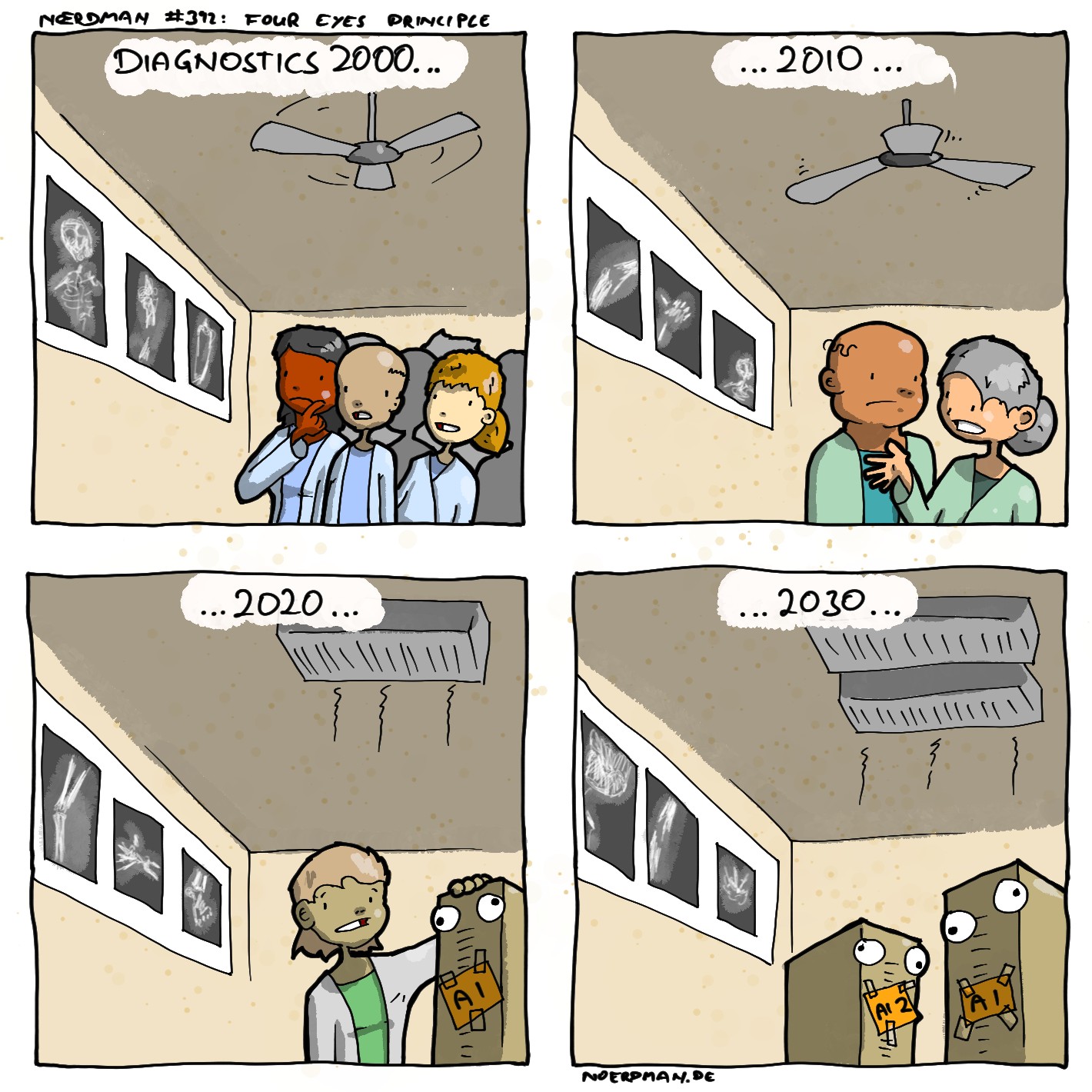

My knowledge of this is several years old, but back then, there were some types of medical imaging where AI consistently outperformed all humans at diagnosis. The studies used existing data: both humans and AI were given the same images, for which the correct answer was already known, and asked to make a diagnosis. Sometimes, even when humans reviewed the image after learning the answer, they couldn't figure out why the AI was right. It's hard to imagine that AI has gotten worse in the years since.

When it comes to my health, I simply want the best outcomes possible, so whatever method gets the best outcomes, I want to use that method. If humans are better than AI, then I want humans. If AI is better, then I want AI. I think this sentiment will not be uncommon. I'm not going to sacrifice my health so that somebody else can keep their job. There are a lot of other things that I would sacrifice, but not my health.

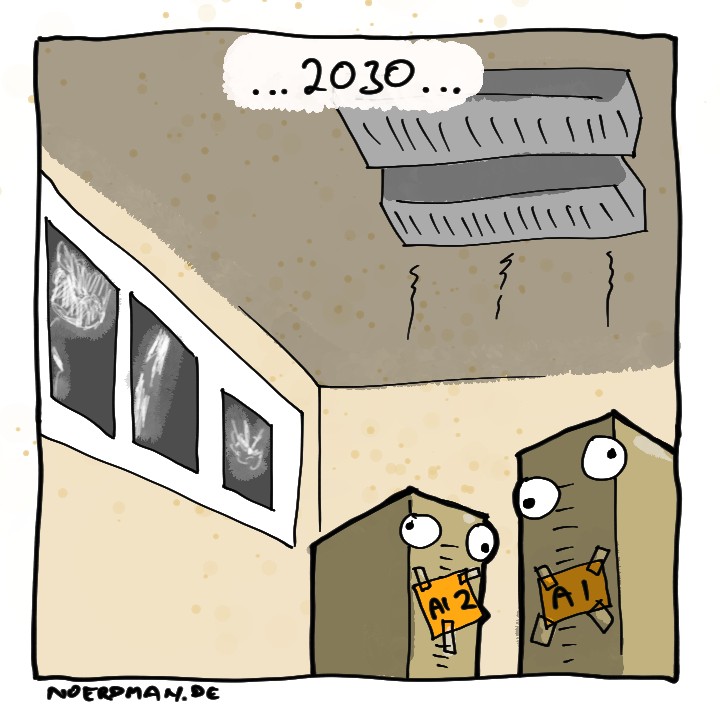

My favourite story about this is the one time a neural network trained on X-rays to recognise tumours, I think, performed amazingly in a study, better than any human could.

Later it turned out that the network had been trained on real-life X-rays of confirmed cases, and it was looking for pen marks. Pen marks mean the image was studied by several doctors, which means it's more likely to be a case that needed a second opinion, which more often than not means there is a tumour. Which obviously means that if the case hadn't been studied by humans before, the machine performed worse than random chance.
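The pen-mark failure can be reproduced in a toy setting. This is only a minimal sketch, not the actual study: the feature names (`image_signal`, `pen_mark`), the 70% and 95% agreement rates, and the one-feature "model" are all made-up assumptions. The model picks whichever single feature predicts the label best on the training data, then gets evaluated on cases no doctor has marked up.

```python
import random


def make_cases(n, pen_marks_present, rng):
    """Build toy cases as (features, label) pairs; label 1 means tumour."""
    cases = []
    for i in range(n):
        label = i % 2  # perfectly balanced classes
        # Assumed genuine but noisy signal: agrees with the label 70% of the time.
        image_signal = label if rng.random() < 0.70 else 1 - label
        if pen_marks_present:
            # Assumed shortcut: pen marks agree with the label 95% of the time.
            pen_mark = label if rng.random() < 0.95 else 1 - label
        else:
            # Nobody has reviewed these images yet, so there are no marks.
            pen_mark = 0
        cases.append(({"image_signal": image_signal, "pen_mark": pen_mark}, label))
    return cases


def accuracy(cases, feature):
    """Accuracy of predicting the label directly from one binary feature."""
    return sum(features[feature] == label for features, label in cases) / len(cases)


def fit_one_feature_model(cases):
    """Pick the single feature that scores best on the training data."""
    return max(("image_signal", "pen_mark"), key=lambda name: accuracy(cases, name))


rng = random.Random(0)
reviewed = make_cases(2000, pen_marks_present=True, rng=rng)
unreviewed = make_cases(2000, pen_marks_present=False, rng=rng)

chosen = fit_one_feature_model(reviewed)
train_acc = accuracy(reviewed, chosen)
deploy_acc = accuracy(unreviewed, chosen)

print(f"model relies on: {chosen}")
print(f"accuracy on reviewed cases:   {train_acc:.2f}")
print(f"accuracy on unreviewed cases: {deploy_acc:.2f}")
```

In this sketch the model latches onto `pen_mark` and drops to coin-flip accuracy on unreviewed cases. It does not reproduce the "worse than random chance" part of the story, which would depend on details of the real data.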

That's the problem with neural networks: it's incredibly hard to figure out what exactly is happening under the hood, and you can never be sure about anything.

And I'm not even talking about LLMs; those are a completely different level of bullshit.

That's why too high a level of accuracy in ML always makes me squint... I don't trust it. As an AI researcher and engineer, you have to do your due diligence and understand your data well before you start training.
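One cheap piece of that due diligence is to check, before training, whether any single piece of metadata predicts the label almost by itself. The sketch below is just one way to do it, assuming binary features. The names (`has_pen_mark`, `portable_scanner`), the eight rows of data, and the 0.9 threshold are hypothetical.

```python
def solo_accuracy(rows, labels, feature):
    """Best accuracy achievable from one binary feature alone."""
    agree = sum(row[feature] == label for row, label in zip(rows, labels))
    # A feature that is reliably inverted leaks just as much, so take the better direction.
    return max(agree, len(labels) - agree) / len(labels)


def flag_suspicious_features(rows, labels, threshold=0.9):
    """Return the names of features that nearly determine the label on their own."""
    return sorted(
        name for name in rows[0]
        if solo_accuracy(rows, labels, name) >= threshold
    )


# Hypothetical metadata for eight scans; label 1 means tumour.
labels = [1, 1, 1, 1, 0, 0, 0, 0]
pen_marks = [1, 1, 1, 1, 0, 0, 0, 0]
portable = [1, 0, 1, 0, 1, 0, 1, 0]
rows = [
    {"has_pen_mark": p, "portable_scanner": s}
    for p, s in zip(pen_marks, portable)
]

flagged = flag_suspicious_features(rows, labels)
print(flagged)
```

A flagged feature isn't automatically a leak, but it's a prompt to go look at the images before trusting any headline accuracy number.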