
Gender bias in open source: Pull request acceptance of women versus men

(www.researchgate.net)


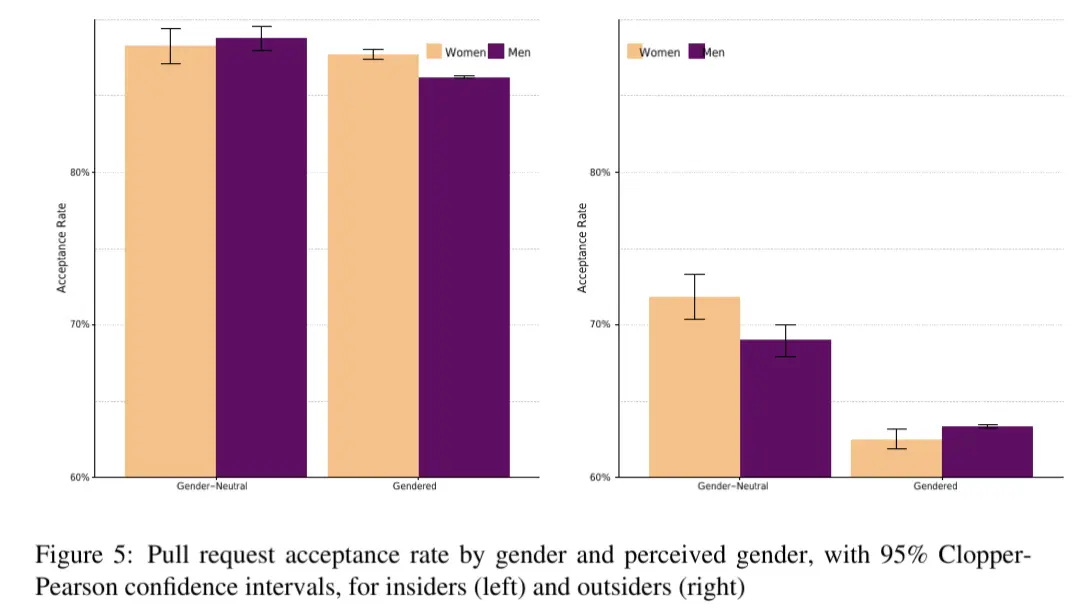

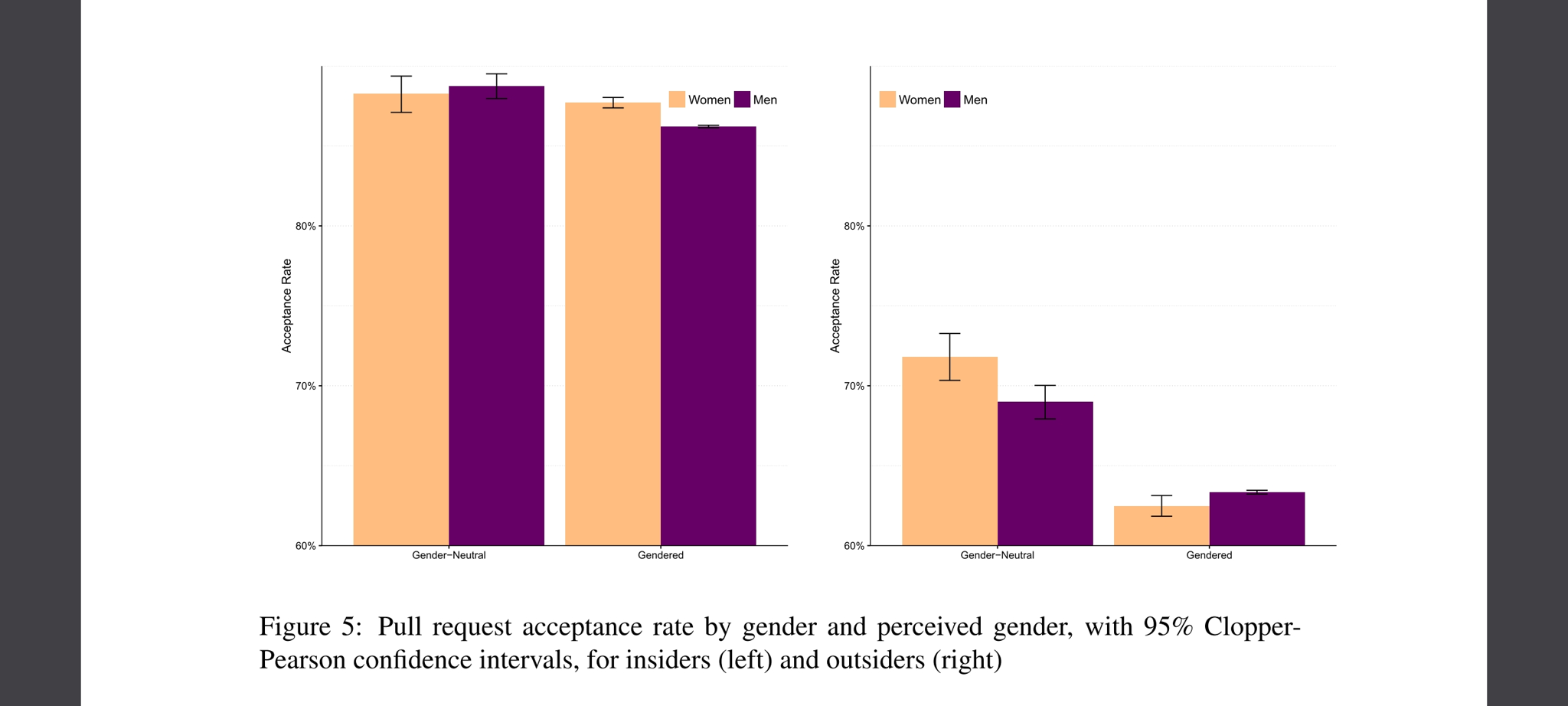

Has anyone found the specific acceptance-rate numbers compared with cases where the gender was unknown?

On ResearchGate I only found the abstract and a chart that doesn't indicate exactly which numbers are shown.

edit:

What I find interesting is that not only women but also men had significantly lower acceptance rates once their gender was disclosed. So either we as humans have a really strange bias here, or non-binary coders are the only ones trusted.

edit²:

I'm not sure I like the method of disclosing people's gender here. Gendered profiles had their full name as their username and/or a photograph as their profile picture that indicated a gender.

So it's not only a gendered vs. non-gendered comparison but also an anonymous vs. identified individual comparison.

And apparently we trust people more the more we know about their skills (insiders rank way higher than outsiders) and the less we know about the person behind them (pseudonym vs. name/photograph).

Page 15

Anti Commercial-AI license (CC BY-NC-SA 4.0)

Thank you. Unfortunately, your link doesn't work either: it just leads to the Creative Commons information. Maybe it's an issue with Firefox Mobile and ad blockers. I'll check it out later on a PC.

Looking at their comment history, they seem to always include that link to the CC license page in an attempt to prevent their comments from being used to train AI.

I have no idea whether that actually works or is just a fad, but that was the link.

Thanks for pointing that out.

Seems like a wild idea, because a) it pollutes the data not only for AI but also for real users like me (I swear I'm not a bot :D), and b) if this approach is used more widely, AIs will learn very quickly to identify and ignore such nonsense links, probably much faster than real humans.

It sounds like a concept similar to CAPTCHAs, which annoy real people yet fail to keep out bots.

Yeah, that is my take as well. At first I thought it was completely useless, just like the old Facebook posts where users put legalese-sounding text on their profile, trying to reclaim rights they signed away when joining Facebook. But here it is possible that they are running their own instance, so there is no unified EULA, which gives the license thing a bit more credibility.

But as you say, bots will just ignore the links, and no single person would stand a chance against big AI companies and their legal teams. Even if they won, the AI would still have been trained on their data, and they would get a pittance at most.

Page 15 of the PDF has this chart

(note the vertical axis starts at 60% acceptance rate)

60% acceptance rate baseline? Doubt!

Their link wasn't to the paper but to the license meant to poison any AIs trained on our posts. Idk if that is actually of any use, though.